What is the Most Useful Software in Chemical Engineering?

The List of Most Important Calculation Tools

Ivana Lukec, PhD (26.03.2019)

The field of chemical engineering is constantly changing, and so are the available calculation tools and software packages. In a fast-paced working life, it is a considerable challenge for a chemical engineer to know which tool is best suited to solving a given problem.

These packages can be applied to typical problems in mass and energy balances, fluid mechanics, heat and mass transfer, unit operations, reactor engineering, and process and equipment design and control.

In this article, we highlight the most important tools and packages and their capabilities, based on the author’s professional experience, the available literature and discussions.

The Figure below summarizes the most useful software packages in chemical engineering:

So, let’s start from the beginning…

General Software for Mathematical Modeling

MS Excel®

Microsoft Office Excel is a spreadsheet application that features calculation and graphing tools, tables, and a macro programming language, Visual Basic for Applications (VBA). Its main advantage is that it is readily available and widely used in industry and academia. This makes it an ideal tool, or interface, not only for performing calculations but also for connecting different software: the end user interacts with Excel while, behind the scenes, other software such as CHEMCAD or MATLAB runs and reports the results back to Excel.

It is best used for:

- Built-in functions & formulas – a large number of built-in functions is available, including statistical (AVERAGE, MEDIAN, T.TEST), mathematical (SUM, ROUND, LOG, LOG10), logical (IF, FALSE, etc.), reference, database, and information functions. They are easy to use in different kinds of formulas.

- Operations with columns and rows – it is easy to find & sort data and use them in replicated formulas etc.

- Plotting – there is a large number of options depending on the needs

- Solver – the Excel tool for numerically solving a set of equations and optimization problems, including fitting a set of data to a given linear or nonlinear equation. Solver is an add-in that must be activated before it can be used.

- Building functions in Visual Basic for Applications – Excel has a built-in capability to create customized functions using Visual Basic for Applications (VBA). This powerful tool can save you time without requiring you to become a programming expert, because it makes it possible to run loops and conditionals in the background. It also allows the user to build relatively large equations that are used in several areas of the worksheet (e.g., polynomials for estimating the specific heat of components) while keeping the calculations easy to read when looking at the formulas in the cells.

- Linking Excel with other software – Excel has become such a standard package that many specialized programs use it as a source of information and for reporting data, since it is more user-friendly. Data in Excel can therefore be loaded into MATLAB, HYSYS or CHEMCAD, and results can be transferred back to Excel.
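To illustrate the kind of user-defined function mentioned above, here is a minimal sketch of a specific-heat polynomial, Cp(T) = a + bT + cT² + dT³, and of the sensible heat obtained by integrating it between two temperatures. It is written in Python rather than VBA so that it runs on its own. The coefficient values are hypothetical placeholders, not data for a real component; in practice they would come from a property databank.

```python
def cp_poly(t_k, a, b, c, d):
    """Heat capacity Cp(T) = a + b*T + c*T**2 + d*T**3, evaluated with Horner's rule."""
    return a + t_k * (b + t_k * (c + t_k * d))


def delta_h(t1_k, t2_k, a, b, c, d):
    """Sensible heat from T1 to T2: the analytical integral of cp_poly with respect to T."""
    def antiderivative(t):
        # a*T + b*T**2/2 + c*T**3/3 + d*T**4/4, written in nested form
        return t * (a + t * (b / 2 + t * (c / 3 + t * d / 4)))
    return antiderivative(t2_k) - antiderivative(t1_k)


# Hypothetical coefficients (Cp in J/(mol K), T in K), for illustration only
COEFFS = (30.0, 1.0e-2, -2.0e-6, 1.0e-10)

if __name__ == "__main__":
    print(f"Cp(400 K) = {cp_poly(400.0, *COEFFS):.3f} J/(mol K)")
    print(f"dH(300 K -> 500 K) = {delta_h(300.0, 500.0, *COEFFS):.1f} J/mol")
```

In Excel, the same logic would sit in a VBA Function, so that any cell can call it just like a built-in formula.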

Mathworks MATLAB®

MATLAB is one of the most widely used software packages in engineering in general and in chemical engineering in particular. Much has been written about this popular software: more than 1,500 books, serving more than 1 million users.

MATLAB is a programming language and computing environment. Its operation is based on .m files, which can be divided into two classes: scripts and functions. A script is simply a number of operations that we want to perform in a certain sequence. Functions are a special type of .m file that must begin with the keyword “function”. Functions can be user-defined or built-in, covering typical operations such as equation solving or the integration of differential equations. MATLAB also predefines all the common algebraic and statistical functions, along with plotting capabilities.

MATLAB has a number of functions and operators for solving linear and nonlinear equations (the backslash operator for linear systems, fzero for a single variable, fsolve for systems of nonlinear equations), optimizing a function (fmincon: constrained optimization; linprog: linear programming; fminunc or fminsearch: unconstrained optimization; bintprog: binary integer programming, replaced by intlinprog in newer releases), and solving ordinary differential equations (the ode solver family, e.g., ode45 and ode15s) or partial differential equations (pdepe).
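The idea behind a one-variable solver such as fzero can be sketched in a few lines. The example below finds the Darcy friction factor from the Colebrook equation for turbulent pipe flow using plain bisection, which is simpler than the bracketing algorithm fzero actually uses. The function names and the search bracket of 0.001 to 0.1 are our own assumptions for illustration.

```python
import math


def bisect(g, lo, hi, tol=1e-12, max_iter=200):
    """Find a root of g in [lo, hi] by bisection; g(lo) and g(hi) must differ in sign."""
    g_lo = g(lo)
    if g_lo * g(hi) > 0:
        raise ValueError("root is not bracketed")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        g_mid = g(mid)
        if g_mid == 0 or (hi - lo) / 2 < tol:
            return mid
        if g_lo * g_mid < 0:  # root lies in the left half
            hi = mid
        else:                 # root lies in the right half
            lo, g_lo = mid, g_mid
    return 0.5 * (lo + hi)


def colebrook_residual(f, reynolds, rel_roughness):
    """Colebrook equation rearranged as g(f) = 0 (Darcy friction factor, turbulent flow)."""
    return 1 / math.sqrt(f) + 2 * math.log10(rel_roughness / 3.7 + 2.51 / (reynolds * math.sqrt(f)))


def friction_factor(reynolds, rel_roughness=0.0):
    """Solve Colebrook for f within an assumed bracket suitable for turbulent flow."""
    return bisect(lambda f: colebrook_residual(f, reynolds, rel_roughness), 1e-3, 0.1)


if __name__ == "__main__":
    print(f"smooth pipe, Re = 1e5: f = {friction_factor(1e5):.5f}")
```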

Some examples of how MATLAB can be used in chemical engineering include:

- Momentum, Mass, and Energy Transfer – Many problems in transport phenomena, even though they represent different physical phenomena, can be described mathematically by partial differential equations and solved with the “pdepe” function.

- Distillation Column Operation – the McCabe-Thiele method, a typical shortcut approach for the initial conceptual estimation of the operation of binary distillation columns

- Modeling of different kinds of process equipment – heat exchangers, pumps, valves, evaporators, columns, reactors etc.

- Reactor design – The models are based on explicit algebraic equations and differential equations. MATLAB’s ODE solvers (e.g., ode45, ode15s) are therefore used to compute the concentration, temperature, and/or pressure profiles along such equipment.

- Control loops analysis, control design and tuning.
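As a sketch of the reactor design case, the code below integrates the mole balances of the series reactions A → B → C in an isothermal batch reactor with first-order kinetics. It uses a fixed-step classical Runge-Kutta (RK4) method, not the adaptive Dormand-Prince scheme behind MATLAB’s ode45, and the rate constants are illustrative values. Because this simple system has an analytical solution, the numerical profiles can be checked directly.

```python
import math


def rk4(rhs, y0, t_end, dt):
    """Integrate dy/dt = rhs(t, y) from t = 0 to t_end with fixed-step classical RK4."""
    t, y = 0.0, list(y0)
    for _ in range(int(round(t_end / dt))):
        k1 = rhs(t, y)
        k2 = rhs(t + dt / 2, [yi + dt / 2 * ki for yi, ki in zip(y, k1)])
        k3 = rhs(t + dt / 2, [yi + dt / 2 * ki for yi, ki in zip(y, k2)])
        k4 = rhs(t + dt, [yi + dt * ki for yi, ki in zip(y, k3)])
        y = [yi + dt / 6 * (a + 2 * b + 2 * c + d)
             for yi, a, b, c, d in zip(y, k1, k2, k3, k4)]
        t += dt
    return y


K1, K2 = 0.5, 0.2  # first-order rate constants in 1/h (illustrative values)


def series_reactions(t, conc):
    """Batch mole balances for A -> B -> C; t is unused because the rates are time-invariant."""
    ca, cb, _ = conc
    return [-K1 * ca, K1 * ca - K2 * cb, K2 * cb]


if __name__ == "__main__":
    ca, cb, cc = rk4(series_reactions, [1.0, 0.0, 0.0], t_end=10.0, dt=0.01)
    exact_ca = math.exp(-K1 * 10.0)  # analytical solution for A
    print(f"t = 10 h: CA = {ca:.6f} (exact {exact_ca:.6f}), CB = {cb:.4f}, CC = {cc:.4f}")
```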

Mathworks Simulink®

Simulink® (Simulation and Link) is a software add-on to MATLAB based on the block diagrams that are common in control engineering. It is an environment for dynamic simulation and process control. Each block can contain a subsystem, which is helpful for large problems: select a group of blocks, right-click, and choose to create a subsystem.

Simulink is easier for many engineers to use because it requires little programming: models are built by connecting blocks instead of writing functions.
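To show what a block diagram actually computes, here is a minimal Python sketch of a typical Simulink-style control loop: a summing junction, a PI controller block and a first-order process block, advanced in time with explicit Euler steps. The process gain, time constant and controller tuning are illustrative assumptions, and Simulink’s own solvers are considerably more sophisticated than a fixed Euler step.

```python
def sum_block(setpoint, measurement):
    """Summing junction: error = setpoint - measurement."""
    return setpoint - measurement


class PIBlock:
    """PI controller: u = Kc * (e + (1/tau_i) * integral of e dt)."""

    def __init__(self, kc, tau_i):
        self.kc, self.tau_i, self.integral = kc, tau_i, 0.0

    def step(self, error, dt):
        self.integral += error * dt
        return self.kc * (error + self.integral / self.tau_i)


class FirstOrderBlock:
    """Process block: tau * dy/dt + y = K * u, advanced with explicit Euler."""

    def __init__(self, gain, tau):
        self.gain, self.tau, self.y = gain, tau, 0.0

    def step(self, u, dt):
        self.y += dt * (self.gain * u - self.y) / self.tau
        return self.y


def simulate(setpoint=1.0, t_end=60.0, dt=0.01):
    """Closed-loop response to a setpoint step; returns the process output at each step."""
    controller = PIBlock(kc=1.0, tau_i=4.0)        # illustrative tuning
    process = FirstOrderBlock(gain=2.0, tau=5.0)   # illustrative process
    y, trajectory = 0.0, []
    for _ in range(int(round(t_end / dt))):
        u = controller.step(sum_block(setpoint, y), dt)  # signal flows along the "wires"
        y = process.step(u, dt)
        trajectory.append(y)
    return trajectory


if __name__ == "__main__":
    traj = simulate()
    print(f"peak = {max(traj):.3f}, final = {traj[-1]:.4f}")
```

The integral action drives the output to the setpoint with no steady-state offset, which is exactly the behavior one would check in a Simulink scope.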

Process Simulators

The simulation, design, and optimization of a chemical process plant, which comprises several processing units interconnected by process streams, are the core activities in process engineering. These tasks require material and energy balances, equipment sizing, and cost calculations. A computer package that can accomplish these tasks is known as a computer-aided process design package, or simply a process simulator.
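The material balancing a process simulator performs can be illustrated with a toy flowsheet, solved the way a sequential-modular simulator would solve it. Fresh feed of A is mixed with a recycle stream, a reactor converts a fixed fraction of A to B, and a separator returns most of the unreacted A. The recycle is treated as a tear stream: it is guessed, the units are calculated in sequence, and the guess is updated by direct substitution until it stops changing. The feed rate, conversion and recovery are hypothetical numbers, and commercial simulators typically accelerate this step with methods such as Wegstein or Broyden.

```python
def mixer(fresh_a, recycle_a):
    """Mixer: total A entering the reactor."""
    return fresh_a + recycle_a


def reactor(feed_a, conversion):
    """Reactor A -> B at fixed fractional conversion; returns (unreacted A, B formed)."""
    return feed_a * (1 - conversion), feed_a * conversion


def separator(a_in, recovery):
    """Separator: returns (A recycled, A leaving with the product)."""
    return a_in * recovery, a_in * (1 - recovery)


def solve_flowsheet(fresh=100.0, conversion=0.6, recovery=0.95, tol=1e-10, max_iter=500):
    """Sequential-modular solution with the recycle stream as the tear stream."""
    recycle = 0.0  # initial guess for the tear stream, kmol/h of A (hypothetical units)
    for iteration in range(1, max_iter + 1):
        a_unreacted, b_product = reactor(mixer(fresh, recycle), conversion)
        new_recycle, a_product = separator(a_unreacted, recovery)
        if abs(new_recycle - recycle) < tol:  # tear stream has converged
            return {"recycle": new_recycle, "B": b_product,
                    "A_out": a_product, "iterations": iteration}
        recycle = new_recycle  # direct substitution
    raise RuntimeError("tear stream did not converge")


if __name__ == "__main__":
    print(solve_flowsheet())
```

Each pass multiplies the error in the recycle estimate by recovery times (1 - conversion), here 0.38, so direct substitution converges steadily. A loop whose factor is close to 1 would converge much more slowly, which is why acceleration methods matter in practice.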

The process simulation market underwent major transformations in the 1985–1995 decade. Relatively few systems have survived, including CHEMCAD, Aspen Plus, Aspen HYSYS, PRO/II, ProSimPlus, SuperPro Designer, and gPROMS.

Chemstations CHEMCAD

CHEMCAD is Chemstations’ software suite for process simulation. Features include process development, equipment design, equipment sizing, thermophysical property calculations, dynamic simulations, process intensification studies, energy efficiency/optimization, data reconciliation, process economics, troubleshooting/process improvement, Microsoft Visual Basic etc.

The CHEMCAD suite includes six products that can be purchased individually or bundled as needed for specific industries, projects, and processes.

- CC-STEADY STATE – used for steady-state simulation of continuous chemical processes; features libraries of chemical components, thermodynamic methods, and unit operations, enabling you to simulate processes from lab scale to full scale. Ideal for users who want to design processes, or rate existing processes, in steady state.

- CC-DYNAMICS – used to conduct dynamic flowsheet analysis, operability check-out, PID loop tuning, operator training, online process control and soft-sensor functionality. Ideal for users who want to design or rate dynamic processes.

- CC-THERM – used for sizing heat exchangers; covers shell-and-tube, plate-and-frame, air-cooled, and double-pipe exchangers. Rigorous designs are based on physical property and phase equilibrium data.

- CC-BATCH allows you to design or rate a batch distillation column.

- CC-SAFETY NET – piping and safety relief network simulation software, used to analyze any pipe network.

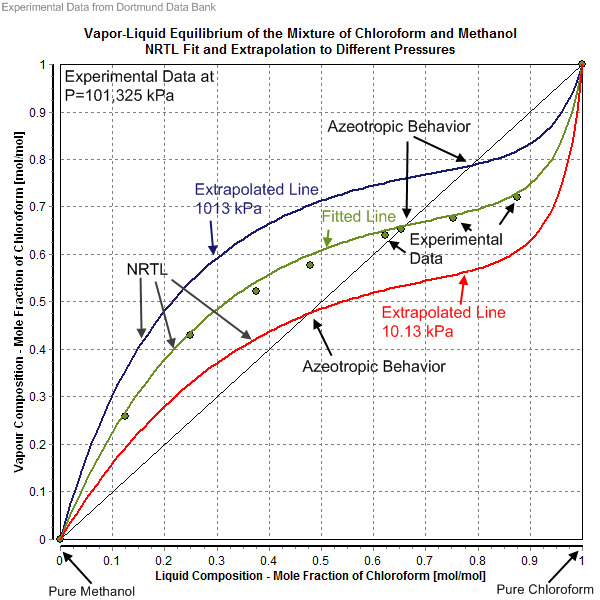

- CC-FLASH – used to calculate physical properties and phase equilibria (VLE, LLE, VLLE) for pure components and mixtures with high accuracy. All products within the CHEMCAD suite include CC-FLASH capabilities.

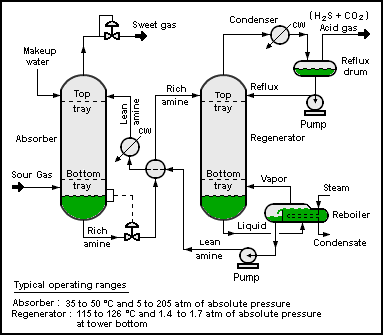
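In its simplest form, a flash calculation such as those performed by CC-FLASH reduces to the Rachford-Rice equation for the vapor fraction. The sketch below assumes constant, composition-independent K-values with hypothetical numbers, whereas a real simulator evaluates the K-values from a thermodynamic model at the flash temperature and pressure and iterates.

```python
def rachford_rice(z, k, tol=1e-12):
    """Isothermal flash with fixed K-values; returns (vapor fraction V/F, x, y).

    Solves sum_i z_i (K_i - 1) / (1 + V (K_i - 1)) = 0 for V in [0, 1] by bisection.
    Assumes a two-phase feed, i.e. the residual changes sign between V = 0 and V = 1.
    """
    def residual(v):
        return sum(zi * (ki - 1) / (1 + v * (ki - 1)) for zi, ki in zip(z, k))

    lo, hi = 0.0, 1.0
    if residual(lo) <= 0 or residual(hi) >= 0:
        raise ValueError("feed is not two-phase for these K-values")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if residual(mid) > 0:  # the residual decreases monotonically with V
            lo = mid
        else:
            hi = mid
    v = 0.5 * (lo + hi)
    x = [zi / (1 + v * (ki - 1)) for zi, ki in zip(z, k)]  # liquid mole fractions
    y = [ki * xi for ki, xi in zip(k, x)]                  # vapor mole fractions
    return v, x, y


if __name__ == "__main__":
    feed = [0.5, 0.3, 0.2]        # feed mole fractions (hypothetical)
    k_values = [2.5, 1.0, 0.3]    # K-values at flash conditions (hypothetical)
    v, x, y = rachford_rice(feed, k_values)
    print(f"V/F = {v:.4f}, x = {[round(i, 4) for i in x]}, y = {[round(i, 4) for i in y]}")
```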

ASPEN HYSYS & ASPEN PLUS

Two similar packages that have all the functionality a process simulator should have are also the most widespread among chemical engineers. AspenTech has a wide portfolio of modeling tools, of which the most important and best known are the process simulators Aspen HYSYS and Aspen Plus.

Aspen HYSYS (or simply HYSYS) is a chemical process simulator used to mathematically model chemical processes, from unit operations to full chemical plants and refineries. HYSYS is able to perform many of the core calculations of chemical engineering, including those concerned with mass balance, energy balance, vapor-liquid equilibrium, heat transfer, mass transfer, chemical kinetics, fractionation, and pressure drop. HYSYS is used extensively in industry and academia for steady-state and dynamic simulation, process design, performance modeling, and optimization.

Aspen Plus is a process modeling tool for conceptual design, optimization, and performance monitoring for the chemical, polymer, specialty chemical, metals and minerals, and coal power industries. It can also be used for mass and energy balances, physical chemistry, thermodynamics, chemical reaction engineering, unit operations, process design and process control.

In general, Aspen Plus is the better tool for chemical process design in areas such as fine chemistry, chemicals and pharma, whilst HYSYS is best for hydrocarbon, petrochemical and petroleum operations involving natural gas, liquefied gases, crude oil, etc.

Specialized Software

Computational Fluid Dynamics

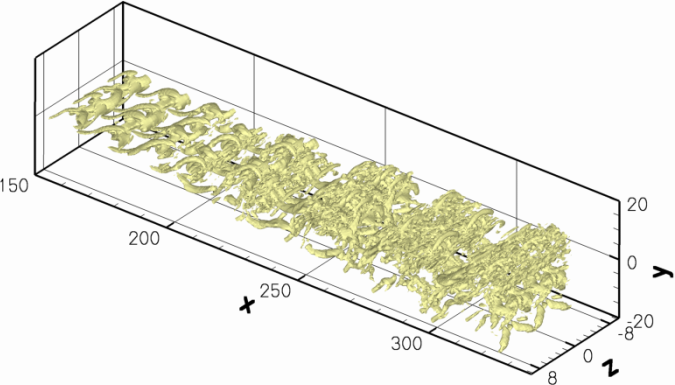

Computational fluid dynamics (CFD) is the use of numerical methods, implemented in computer programs, to solve the mass, momentum, energy, and species conservation equations and related phenomena.

CFD and multiphysics modeling and simulation can be applied to many science and engineering disciplines. The main areas in chemical engineering are the following:

- Combustion processes,

- Food process engineering,

- Fuel cells, batteries, and supercapacitors,

- Microfluidic flows and devices,

- Pipe flows and mixing,

- Reaction engineering.

CFD is based on partial differential equations, so knowledge of numerical mathematics is essential for solving them with an appropriate numerical technique.

Since these conservation equations are discretized and solved on computers, knowledge of programming languages such as FORTRAN, C++, Java, or MATLAB is equally important.

The CFD-based modeling tools most popular in the scientific and engineering communities are ANSYS CFX, ANSYS Fluent, ANSYS Multiphysics, COMSOL Multiphysics, FLOW-3D, STAR-CD and STAR-CCM+, and the open-source OpenFOAM. Other CFD-based tools, such as AVL FIRE or ANSYS Polyflow, are also on the market, but they are specialized for particular physical systems, such as internal combustion engines, powertrains, polymers, glass, metals, and cement process technologies.

The most widely used commercial tools, such as ANSYS Fluent, STAR-CD, and STAR-CCM+, are based on the finite volume method, whereas ANSYS CFX uses a finite-element-based control volume method. COMSOL Multiphysics, on the other hand, is based on the finite element method.
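To make the finite volume idea concrete, here is a minimal sketch for steady one-dimensional heat conduction in a rod with fixed end temperatures. The rod is divided into cells, a heat flux balance over each cell gives one linear equation, and the resulting tridiagonal system is solved with the Thomas algorithm (TDMA). The rod properties are illustrative assumptions. CFD codes apply the same cell-balance principle in three dimensions, with convection terms and far more elaborate solvers.

```python
def solve_tdma(a_w, a_p, a_e, b):
    """Solve a_p[i]*T[i] = a_w[i]*T[i-1] + a_e[i]*T[i+1] + b[i] (Thomas algorithm)."""
    n = len(a_p)
    p, q = [0.0] * n, [0.0] * n
    for i in range(n):  # forward elimination
        denom = a_p[i] - (a_w[i] * p[i - 1] if i > 0 else 0.0)
        p[i] = a_e[i] / denom
        q[i] = (b[i] + (a_w[i] * q[i - 1] if i > 0 else 0.0)) / denom
    t = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        t[i] = p[i] * t[i + 1] + q[i] if i < n - 1 else q[i]
    return t


def conduction_fvm(n_cells=5, length=0.5, k=1000.0, area=0.01, t_left=100.0, t_right=500.0):
    """Cell-centered finite volume discretization of d/dx(k A dT/dx) = 0."""
    dx = length / n_cells
    d = k * area / dx  # conductance between neighboring cell centers
    a_w, a_e, a_p, b = [], [], [], []
    for i in range(n_cells):
        west = d if i > 0 else 0.0
        east = d if i < n_cells - 1 else 0.0
        # Boundary cells: the boundary face is dx/2 from the center, so its conductance is 2*d
        s_p = (2 * d if i == 0 else 0.0) + (2 * d if i == n_cells - 1 else 0.0)
        s_u = (2 * d * t_left if i == 0 else 0.0) + (2 * d * t_right if i == n_cells - 1 else 0.0)
        a_w.append(west)
        a_e.append(east)
        a_p.append(west + east + s_p)
        b.append(s_u)
    return solve_tdma(a_w, a_p, a_e, b)


if __name__ == "__main__":
    print([round(t, 3) for t in conduction_fvm()])
```

With no heat source the exact profile is linear, and this discretization reproduces it at the cell centers (140, 220, 300, 380 and 460 for the default values), which makes a convenient first check before adding sources or convection.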

Review of open source process simulators

It is not always necessary to use only expensive simulation packages

SimulateLive.com 07.04.2018.

One of the most important reasons why process simulation is not used more widely across the industry is the price of simulation packages. A single license for some of the most widely used packages can cost tens of thousands of USD. Although this price can easily be justified by the benefits achieved, it still often remains an obstacle, especially for small engineering companies. Thanks to the hard-working and generous groups of experts who share their knowledge with all of us, free alternatives exist, and this article presents some of the available open source process simulators. Note, however, that their license terms differ, so check whether commercial use is permitted before relying on one in paid work.

1. DWSIM

DWSIM is software for the modeling, simulation, and optimization of steady-state chemical processes. It is one of the most popular open source simulators and runs on Windows, Linux and Mac OS X. It is written in VB.NET and C# and features a comprehensive set of unit operations, advanced thermodynamic models, support for reacting systems, petroleum characterization tools, and a fully featured graphical interface. It should definitely be near the top of your list if you are new to process simulation or want results for a less complex process problem. It has a large number of important features, most of them familiar from standard simulation packages. Some of them are:

- VLE, VLLE, SLE and Aqueous Electrolyte calculations using Equation of State, Activity Coefficient and Chao-Seader models,

- Supports CAPE-OPEN Unit Operations and Thermo 1.0/1.1 Property Packages,

- Exposes Property Packages as CAPE-OPEN 1.1 Thermodynamic Equilibrium and Property Calculators,

- Supports ChemSep’s Component Database and Column Model,

- Process Flowsheet Diagram (PFD) Drawing Interface,

- Rigorous Distillation/Absorption Column models,

- Support for Chemical Reactions and Reactors,

- Characterization of Petroleum Fractions using bulk properties and/or ASTM/TBP distillation curves, and creation of Hypothetical Components using UNIFAC groups,

- Multivariate Optimization and Sensitivity Analysis utility,

- Excel Interface for Thermodynamic Calculations,

- Standalone Thermodynamics Library,

- Component Creator Utility for user-defined components.
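As a small taste of the VLE calculations listed above, the sketch below computes the bubble-point temperature of a benzene-toluene liquid by combining Raoult’s law with the Antoine equation. Raoult’s law assumes an ideal solution, which is far simpler than the equation-of-state and activity-coefficient models that a simulator like DWSIM provides. The Antoine constants are commonly tabulated values for P in mmHg and T in °C; treat them as illustrative and check them against a property databank before serious use.

```python
# Antoine constants (illustrative): log10(Psat / mmHg) = A - B / (T / degC + C)
ANTOINE = {
    "benzene": (6.90565, 1211.033, 220.790),
    "toluene": (6.95464, 1344.800, 219.482),
}


def psat_mmhg(component, t_c):
    """Pure-component vapor pressure from the Antoine equation."""
    a, b, c = ANTOINE[component]
    return 10 ** (a - b / (t_c + c))


def bubble_point(x, p_mmhg=760.0, t_lo=0.0, t_hi=200.0, tol=1e-9):
    """Bubble-point T (degC) where sum_i x_i * Psat_i(T) = P (Raoult's law), by bisection."""
    def excess_pressure(t_c):
        return sum(xi * psat_mmhg(name, t_c) for name, xi in x.items()) - p_mmhg

    while t_hi - t_lo > tol:
        mid = 0.5 * (t_lo + t_hi)
        if excess_pressure(mid) > 0:  # too hot: the liquid would already boil
            t_hi = mid
        else:
            t_lo = mid
    t_bubble = 0.5 * (t_lo + t_hi)
    y = {name: xi * psat_mmhg(name, t_bubble) / p_mmhg for name, xi in x.items()}
    return t_bubble, y


if __name__ == "__main__":
    t, y = bubble_point({"benzene": 0.5, "toluene": 0.5})
    print(f"T_bubble = {t:.2f} degC, vapor composition = {y}")
```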

A future article will present an analysis of some simple calculations using DWSIM.

Until then, you can find out more about DWSIM and download the software here: https://sourceforge.net/projects/dwsim/files/?source=navbar

2. COCO simulator

Another process simulator with a rich set of features is COCO. Behind this exotic name is another free-of-charge, non-commercial, graphical, modular and CAPE-OPEN compliant environment for steady-state, sequential process simulation. It was originally intended as a test environment for CAPE-OPEN modeling tools, but it now provides free chemical process simulation for students. It is an open flowsheet modeling environment that allows anyone to add new unit operations or thermodynamic packages.

A list of features includes:

- TEA, the Thermodynamics for Engineering Applications package,

- COUSCOUS, the CAPE-OPEN Unit-operations Simple package shipped with COCO, which contains a splitter, a mixer, heat exchangers, pumps and reactors, among other unit operations,

- the Reaction Numerics package.

The free package comes with certain limitations, such as the 40-compound limit of the bundled ChemSep-LITE column model, but it can definitely be used for simplified or shortcut modeling. Especially useful is the collection of free sample flowsheets available for download on its website: https://www.cocosimulator.org/index_sample.html

Sample flow sheets include prepared examples such as:

- Pressure swing azeotropic distillation of methanol and acetone,

- Benzene-toluene-xylene divided wall column,

- Methanol synthesis from syngas,

- Combined heat and power cycle.

To download the software, follow this link: https://www.cocosimulator.org/index_download.html

3. OPENMODELICA

OpenModelica is an open-source, Modelica-based modeling and simulation environment intended for industrial and academic use. Its long-term development is supported by a non-profit organization, the Open Source Modelica Consortium (OSMC). OpenModelica is not a process-oriented simulator like DWSIM but a general-purpose modeling tool, a platform closer to MATLAB.

OpenModelica is a comprehensive compilation and simulation environment based on free software distributed in binary and source code form for research, teaching, and industrial usage.

The consortium has a long list of industrial and university members, including ABB, Siemens and Evonik.

Modeling using OpenModelica enables:

- Multi-domain modeling

- Hybrid modeling

- Visual component modeling etc.

One example of OpenModelica in use is ABB’s OPTIMAX®, which provides advanced model-based control products for power generation and water utilities. Plant models are typically formulated in Modelica and deployed through FMI 2.0. The optimizing control applications maximize efficiency and give more flexibility to large conventional power plants that face frequent load ramps and start-ups. Moreover, they aggregate small renewable units into large virtual power plants. This enables renewables to provide grid services such as power/frequency control, achieving grid stability despite a high penetration of renewable power while increasing revenues. ABB uses several compatible Modelica tools, including OpenModelica, depending on specific application needs. OpenModelica provides debugging features that save a lot of time during model development.

To download OpenModelica software, please follow this link:

https://openmodelica.org/download/download-windows

Complete List of Process Simulators

Review of simulation software for industrial plants

SimulateLive.com 22.05.2017.

Steady-state and dynamic plant simulations are powerful tools that help engineers create optimal process designs, analyze plant operations, develop performance improvement strategies, monitor and optimize operations, and much more.

Below is a full list of process simulator packages with their key characteristics. They are available for different industries, purposes and scales, and under different commercial conditions. While some of them are very expensive, others are affordable, and a couple are entirely free of charge. So, no more excuses for not using process simulation tools. On this list, there will definitely be something for you:

Aspen Plus

Developer: AspenTech

On the market: commercial

Main Features:

Aspen Plus is one of the best-known process simulators in industry and also one of the most expensive. It offers a wide range of calculation capabilities for the design, operation, and optimization of safe, profitable manufacturing facilities. It enables steady-state and dynamic simulation of petrochemical, chemical and pharmaceutical processes, including non-ideal, electrolytic, and solid systems. Mixed solution methodologies can be used to achieve fast calculations and provide full specification flexibility, and modeling investments can be leveraged by scaling from single models to full-facility flowsheets.

CADsim Plus

Developer: Aurel Systems Inc.

On the market: commercial, flexible licensing

Main Features:

CADSIM Plus is chemical process simulation software that can perform mass and energy balances and simulate dynamic conditions. It is a first-principles dynamic chemical process simulator and a full-featured Computer Assisted Drawing (CAD) front-end in one package. CADSIM Plus includes a comprehensive set of generic process modules and has a number of optional module libraries for various applications. CADSIM Plus can also be used to develop complex dynamic simulations with control logic and batch operations.

Chemcad

Developer: Chemstations Inc.

On the market: commercial

Main Features:

Chemical process simulation software that includes libraries of chemical components, thermodynamic methods, and unit operations, allowing steady-state and dynamic simulation of continuous chemical processes from lab scale to full scale. The steady-state software is ideal for users who want to design processes, or rate existing processes, in steady state. The dynamic simulation software takes steady-state simulations to the next level of fidelity and allows dynamic analysis of flowsheets for operability check-out, PID loop tuning, operator training, and even online process control and soft-sensor functionality; it is ideal for users who want to design or rate dynamic processes.

ChromWorks

Developer: YPSO Facto

On the market: commercial

Main Features:

ChromWorks is chromatographic process simulation software that allows rational use of experimental data and the simulation of standard single columns as well as complex continuous multi-column processes. A software package for the simulation of ion-exchange processes can simulate very different situations, ranging from amino acid purification to organic acid recovery and hydrometallurgy. Based on proven technical considerations and complemented by a cost evaluation module, this powerful and user-friendly simulation tool is designed to match the approach and needs of chemists and biochemists.

COCO

Developer: AmsterCHEM

On the market: free of charge

Main Features:

COCO (CAPE-OPEN to CAPE-OPEN) is a simulation environment whose modules have interesting names, such as COFE, the CAPE-OPEN Flowsheet Environment, an intuitive graphical user interface for chemical flowsheeting. COFE displays stream properties, handles unit conversion and provides plotting facilities.

TEA – COCO’s Thermodynamics for Engineering Applications, is based on the code of the thermodynamic library of ChemSep and includes a data bank of over 430 commonly used chemicals. The package exhibits more than 100 property calculation methods with their analytical or numerical derivatives.

COUSCOUS – the CAPE-OPEN Unit-operations Simple package is shipped with COCO. It contains a splitter, a mixer, heat exchangers, pumps and reactors amongst other unit operations. ChemSep-LITE, a limited version of ChemSep with a maximum of 40 compounds and 300 stages, can serve as an equilibrium distillation unit operation in COCO.

The simulation package can be downloaded here: https://www.cocosimulator.org/index_download.html

Design II for Windows

Developer: WinSim Inc.

On the market: commercial

Main Features:

Design II performs complete heat and material balance calculations for a wide variety of pipeline and processing applications. The simulator’s easy-to-create flowsheets allow process engineers to concentrate on engineering rather than on computer operations, and only a minimum amount of input is required to use DESIGN II FOR WINDOWS. The simulator also features sizing and rating of heat exchangers and separators within the flowsheet. The DESIGN II FOR WINDOWS database contains 1,200+ pure components, and others can be added via CHEMTRAN. Also included is a crude library with 38 already-characterized world crudes.

DWSim

Developer: Daniel Medeiros

On the market: open source

Main Features:

DWSIM is an open source, CAPE-OPEN compliant chemical process simulator for Windows and Linux systems. Written in VB.NET and C#, DWSIM features a comprehensive set of unit operations, advanced thermodynamic models, support for reacting systems, petroleum characterization tools and a fully-featured graphical interface. Some of the features are VLE, VLLE and SLE calculations using equation of state, supports CAPE-OPEN Unit Operations and Thermo 1.0/1.1 Property Packages, supports ChemSep’s Component Database and Column Model, Process Flowsheet Diagram (PFD) Drawing Interface, Rigorous Distillation/Absorption Column models, Support for Chemical Reactions and Reactors, Characterization of Petroleum Fractions using bulk properties and/or ASTM/TBP distillation curves and creation of Hypothetical Components using UNIFAC groups, Multivariate Optimization and Sensitivity Analysis utility, Excel Interface for Thermodynamic Calculations and more…

Download available here: https://sourceforge.net/projects/dwsim/files/?source=navbar

DynoChem

Developer: Scale-up Systems

On the market: commercial

Main Features:

DynoChem is process development and scale-up software for scientists and engineers working in the pharmaceutical industry. DynoChem has been used in Big Pharma for over a decade. Companies deploy the software at R&D sites and Primary Manufacturing facilities globally for routine use by scientists and engineers to help facilitate key corporate objectives.

Roll-out of the software is controlled by each company and its adoption is assisted by on-site training, technical user support on projects, regional user group meetings and targeted application webinars. In addition, tools are provided for companies to develop their own template models, implement multi-site equipment databases and also train in-house experts.

EMSO

Developer: Alsoc Project

On the market: open source

Main Features:

EMSO is the acronym for Environment for Modeling, Simulation and Optimization. The ALSOC project develops and maintains the specifications of a modeling language suited to the synthesis, simulation, optimization, and process control of general processes. EMSO is written entirely in C++ and is currently available for Windows and Linux, but it can be compiled for other platforms if desired. It is an equation-oriented simulator with a large set of built-in functions. Models are written in a modeling language, so the user does not need to be a programmer. It supports both static and dynamic simulation. A graphical user interface can be used for model development, simulation execution, and results visualization. A plug-in system lets the user embed code written in C, C++ or FORTRAN into the models.

The software can be downloaded from the project website.
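An equation-oriented simulator collects all model equations into one residual vector and solves them simultaneously, typically with a Newton-type method, instead of calculating unit by unit. The sketch below shows the principle for a hypothetical steady-state CSTR with a second-order reaction A → B: two mole balances, two unknown concentrations, solved together by Newton’s method with a finite-difference Jacobian. It illustrates the approach only and is not how EMSO is implemented internally.

```python
def solve_linear(a, b):
    """Gaussian elimination with partial pivoting for a small dense system a x = b."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def newton(residuals, x0, tol=1e-10, max_iter=50, h=1e-7):
    """Solve residuals(x) = 0 for all unknowns at once (equation-oriented style)."""
    x = list(x0)
    for _ in range(max_iter):
        f = residuals(x)
        if max(abs(fi) for fi in f) < tol:
            return x
        jac = [[0.0] * len(x) for _ in f]  # forward-difference Jacobian, column by column
        for j in range(len(x)):
            xp = x[:]
            xp[j] += h
            fp = residuals(xp)
            for i in range(len(f)):
                jac[i][j] = (fp[i] - f[i]) / h
        dx = solve_linear(jac, [-fi for fi in f])
        x = [xi + dxi for xi, dxi in zip(x, dx)]
    raise RuntimeError("Newton iteration did not converge")


# Hypothetical CSTR data: F in L/s, V in L, K in L/(mol s), CA0 in mol/L
F, V, K, CA0 = 1.0, 10.0, 0.5, 2.0


def cstr_balances(x):
    """Steady-state mole balances for A -> B with rate K * CA**2."""
    ca, cb = x
    rate = K * ca ** 2
    return [F * (CA0 - ca) - V * rate,   # balance on A
            -F * cb + V * rate]          # balance on B


if __name__ == "__main__":
    ca, cb = newton(cstr_balances, [CA0, 0.0])
    print(f"CA = {ca:.5f} mol/L, CB = {cb:.5f} mol/L")
```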

Eq-comp

Developer: Eq-comp

On the market: commercial, services paid per calculation basis

Main Features:

EQ-COMP is a chemical engineering process simulation tool for automatically calculating the vapor-liquid equilibrium properties of pure hydrocarbons and of binary and multicomponent hydrocarbon mixtures. EQ-COMP is built with MS Excel and VBA. It predicts the vapor-liquid equilibrium properties of hydrocarbon mixtures using the Peng-Robinson cubic equation of state. The possible components include non-polar and mildly polar hydrocarbons and non-polar or mildly polar inorganic gases. EQ-COMP can be used for pressure vessel design, distillation column design, natural gas pipeline design and the design of other hydrocarbon handling equipment, as well as for oil well simulation and natural gas contract drafting. EQ-COMP can predict the phase equilibrium properties of multicomponent hydrocarbon mixtures very accurately. It has been designed to provide correct properties for almost any composition of the 100+ hydrocarbons and 3 inorganic gases it covers.
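To show what an equation-of-state tool computes at its core, the sketch below evaluates the Peng-Robinson compressibility factor Z of a pure gas by solving the PR cubic with Newton’s method. Starting from the ideal-gas value Z = 1 usually leads to the largest, vapor-like root, but that is an assumption of this sketch, not a general guarantee. The methane critical constants are standard literature values used for illustration, and this generic textbook form handles neither mixtures nor phase selection.

```python
import math

R = 8.314462618  # gas constant, J/(mol K)


def pr_z_vapor(t_k, p_pa, tc_k, pc_pa, omega, tol=1e-12, max_iter=100):
    """Vapor-like root of the Peng-Robinson cubic in Z for a pure component."""
    kappa = 0.37464 + 1.54226 * omega - 0.26992 * omega ** 2
    alpha = (1 + kappa * (1 - math.sqrt(t_k / tc_k))) ** 2
    a = 0.45724 * R ** 2 * tc_k ** 2 / pc_pa * alpha
    b = 0.07780 * R * tc_k / pc_pa
    big_a = a * p_pa / (R * t_k) ** 2
    big_b = b * p_pa / (R * t_k)
    # PR cubic written as Z^3 + c2 Z^2 + c1 Z + c0 = 0
    c2 = -(1 - big_b)
    c1 = big_a - 3 * big_b ** 2 - 2 * big_b
    c0 = -(big_a * big_b - big_b ** 2 - big_b ** 3)
    z = 1.0  # ideal-gas starting guess (assumed to lead to the vapor root)
    for _ in range(max_iter):
        f = z ** 3 + c2 * z ** 2 + c1 * z + c0
        df = 3 * z ** 2 + 2 * c2 * z + c1
        step = f / df
        z -= step
        if abs(step) < tol:
            return z
    raise RuntimeError("PR cubic did not converge")


if __name__ == "__main__":
    # Methane: Tc = 190.6 K, Pc = 45.99 bar, omega = 0.012 (literature values)
    print(f"Z(CH4, 300 K, 50 bar) = {pr_z_vapor(300.0, 50e5, 190.6, 45.99e5, 0.012):.4f}")
```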

gPROMS

Developer: Process Systems Enterprise (PSE)

On the market: commercial

Main Features:

gPROMS FormulatedProducts is PSE’s new platform for the integrated design and optimization of formulated products and their manufacturing processes. It allows scientists and engineers to screen formulations for end-user attributes, identify risk factors, determine whether the formulations can be manufactured efficiently, and explore and optimize the design space of the whole formulation and manufacturing chain. It is an advanced mechanistic process modeling tool that integrates crystallization, solids processing and oral absorption on a single platform. It builds on and strengthens the existing capabilities of gCRYSTAL®, gSOLIDS® and gCOAS® in a systems-based approach that links product performance to process and formulation parameters. Application areas include crystallization, solids processing, life sciences, food & dairy and more.

Hydroflo

Developer: Tahoe Design Software

On the market: commercial and academic version for students and educators free of charge

Main Features:

HYDROFLO determines the steady-state flows, pressures and other operating parameters in single-source/single-discharge gravity and pumped flow systems. Pumped systems can be closed-loop or open reservoir/tank systems, and virtually any incompressible fluid system commonly found in the industrial process, water supply, wastewater treatment, fire protection, chemical process, mine dewatering, irrigation and HVAC industries can be modeled.

HYDROFLO’s drag-and-drop workspace gives the designer a vertical space view of the system. Group element data editing makes large-scale design changes very easy. Instant feedback on analysis results is available simply by hovering over elements. Complete, detailed PDF reports of system elements, the hydraulic grade line and pump plots are included, and NPSHA is calculated and compared with NPSHR.
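The NPSHA value mentioned above comes from a head balance on the suction side of the pump. Here is a minimal sketch, assuming water at about 20 °C in an open tank at atmospheric pressure. The suction-line friction loss and the 3.0 m NPSHR are hypothetical numbers; a tool like HYDROFLO would compute the friction loss from the pipe network and take NPSHR from the pump curve.

```python
G = 9.80665  # standard gravity, m/s^2


def npsh_available(p_surface_pa, p_vapor_pa, rho, static_head_m, friction_loss_m):
    """NPSHA = (P_surface - P_vapor)/(rho g) + z_static - h_friction, in meters of liquid.

    static_head_m is the liquid level above the pump suction (negative for a suction lift).
    """
    return (p_surface_pa - p_vapor_pa) / (rho * G) + static_head_m - friction_loss_m


def npsh_margin(npsha_m, npshr_m):
    """Margin of available over required NPSH; it must be positive, and larger in practice."""
    return npsha_m - npshr_m


if __name__ == "__main__":
    # Water at about 20 degC: rho ~ 998.2 kg/m^3, vapor pressure ~ 2.339 kPa
    npsha = npsh_available(101325.0, 2339.0, 998.2, static_head_m=2.0, friction_loss_m=0.5)
    print(f"NPSHA = {npsha:.2f} m, margin over a hypothetical 3.0 m NPSHR = {npsh_margin(npsha, 3.0):.2f} m")
```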

Hysys

Developer: AspenTech

On the market: commercial

Main Features:

Aspen HYSYS is similar to Aspen Plus but dedicated to the simulation of oil, gas and refining processes. It provides industry-specific unit operation models and powerful tools to optimize operating parameters for feedstock changes. Aspen HYSYS Petroleum Refining now also has a complete suite of rigorous kinetic models supporting all major refinery processes. With calibrated models, the simulation can support better planning and optimization decisions. The software also includes tools to easily import and export petroleum assays to and from Aspen PIMS with the new Aspen Assay Management, and the export of rigorous reactor models to Aspen PIMS (LP software) can be automated.

HSC Chemistry

Developer: Outotec

On the market: commercial

Main Features:

The tool makes it possible to carry out thermodynamic and mineral processing calculations quickly and easily on a standard computer. It is an essential software toolkit for process research, development, design, and digitalization, as well as for estimating process efficiencies, yields, and environmental footprints.

The modules included in HSC Chemistry have been designed to help solve real problems in industrial processes or to decrease the amount of expensive trial-and-error chemistry at the R&D stage. The software contains 24 modules connected to 12 integrated databases. The modules operate like independent programs, each with its own interface and can be used to create process models for hydrometallurgical and pyrometallurgical systems, as well as for minerals processing and physical recycling systems.

IndissPlus

Developer: RSI

On the market: commercial

Main Features:

IndissPlus, based on First Principles of Chemical Engineering, accurately models process behavior during normal operation or transient periods, whether the models are part of a dynamic study or incorporated into an Operator Training Simulator (OTS) solution.

The application has a rich library of Thermodynamics Packages, Pure Components, and Unit Operation Modules. If 3rd party proprietary components, thermodynamics packages or chemical reactor models are required they can be seamlessly integrated within the IndissPlus platform, by taking advantage of the multi-layer component architecture.

IndissPlus incorporates a Process Diagram Builder to enable users to interactively build their flowsheets using the menus, dropdowns or drag and drop capabilities. Unit Operation detail can easily be specified through each Unit Operation’s Faceplate by filling in the appropriate information in the form of a datasheet.

ITHACA

Developer: Element Process Technology

On the market: commercial

Main Features:

ITHACA is a low-cost dynamic process simulator for chemicals, mining and minerals. Its features include a graphical interface for process flow diagrams; real-time information about the degrees of freedom of the simulation, both global and per equipment item (local); integration with Microsoft Excel through an add-in; export of results in clear text; export of dynamic simulations as MSO files for use by the EMSO process simulator; an updated thermodynamic library with the most recent models; tools for oil assay modeling using pseudocomponents; a specific library for water/steam processes; and communication with data logging and operating systems via OPC.

LIBPF

Developer: LIBPF

On the market: no cost

Main Features:

The LIBPF™ SDK (Software Development Kit) provides the building blocks required to model industrial continuous processes.

Programming with LIBPF is simple for process engineers since all concepts used in process modeling have already been translated into C++ classes: values with units of measurement, components, phases, reactions, material streams, unit operations, multistage unit operations and so on. Furthermore, even the flowsheets created by the model developer are new object types. Defining flowsheets as objects allows one to separate structure and configuration (unique for a given flowsheet type) from the operating conditions, which can differ for each instance. This separation makes model reuse easier and encourages an orderly workflow.
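
LIBPF itself is a C++ SDK; the Python sketch below only illustrates the concept described above, namely that a flowsheet type fixes structure and configuration while each instance carries its own operating conditions. All class names, unit names and condition keys are hypothetical and are not part of the LIBPF API.

```python
from dataclasses import dataclass, field


class FlowsheetType:
    """Structure shared by every instance of a flowsheet type (hypothetical)."""
    units = ()
    connections = ()


class FlashTrain(FlowsheetType):
    # Structure and configuration: fixed once for this flowsheet type.
    units = ("feed", "heater", "flash")
    connections = (("feed", "heater"), ("heater", "flash"))


@dataclass
class FlowsheetInstance:
    """One case of a flowsheet type with its own operating conditions."""
    kind: type
    conditions: dict = field(default_factory=dict)

    def describe(self):
        path = " -> ".join(self.kind.units)
        conds = ", ".join(f"{k}={v}" for k, v in sorted(self.conditions.items()))
        return f"{self.kind.__name__}: {path} [{conds}]"


# Two cases share the same structure but differ in operating conditions.
base = FlowsheetInstance(FlashTrain, {"T_flash_C": 80.0, "P_flash_bar": 1.5})
hot = FlowsheetInstance(FlashTrain, {"T_flash_C": 95.0, "P_flash_bar": 1.5})

if __name__ == "__main__":
    print(base.describe())
    print(hot.describe())
```

Because the structure lives in the type, a new case only needs a new set of conditions, which is what makes model reuse easier.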

Top 3 Programming Languages for Chemical Engineers

What are the best programming tools for solving chemical engineering problems?

SimulateLive.com 15.11.2015.

Programming skills can be applied in many areas of chemical engineering, at industrial scale or in research labs, covering everything from process modeling, analysis, identification, planning and setup to control and maintenance.

Depending on the problem you are trying to solve, you may be able to use predefined functions, or you may have to write your own code. And if you want to work for a company that develops such software tools, you need a good command of programming.

Everyone wants to learn how to code, but what is the best entry point? Here are the top 3 ways to check "programming" off your skills life-list.

C++

The reason to choose C++ as your first language is purely mercenary. C++ and Java are the two most commonly used languages in enterprise programming projects. If you want to devote your time to learning a single language that has serious enterprise implications, then C++ could be your answer.

You’ll pay for the commercial advantage with a rather steep learning curve, since C++ wasn’t designed as a learning tool. On the other hand, it was designed to allow a programmer to control computer hardware at a very low level. When you learn C++, you have the opportunity to learn precisely how a computer works and how to make it work for your purposes.

With C++ you will need to code everything, and developing the skill will not be easy, but it will certainly pay off. C++ is the most common programming language for building specialized process simulation software packages such as Hysys, ChemCAD, etc.

No paid licence is needed, so you can start learning C++ today. Here you can find Microsoft Visual Studio for C++ to download and install.

MATLAB

If your interest in programming is coupled with needs for numerical analysis, especially for scientific or engineering purposes, then MATLAB could be the first step you need to take in learning to code. MATLAB is a scripting language originally developed by a computer science professor at the University of New Mexico who wanted to save his students the pain of learning Fortran.

Unlike many of the other languages in this article, MATLAB isn’t free. If you only want it for personal use, it’s $149. If you decide you want to use it commercially, the price goes up significantly, to more than $2,000. MATLAB is a powerful solution for those who want to develop applications that visualize data or conduct advanced data analysis. If this sounds like your target app, then MATLAB might be your best learning option.

A MATLAB trial version can be downloaded from the MathWorks website.

Mathematica

Like MATLAB, Mathematica is one of the most popular programming tools in chemical engineering. As its name suggests, it was first developed to solve complex mathematical problems with as little coding as possible; it later spread to all areas of chemical engineering. Today it has nearly 5,000 built-in functions covering all areas of technical computing, all integrated so that they work together within the Mathematica system.

Mathematica builds in unprecedentedly powerful algorithms across all areas—many of them created at Wolfram using unique development methodologies and the unique capabilities of the Wolfram Language.

With its intuitive English-like function names and coherent design, the Wolfram Language is uniquely easy to read, write, and learn.

Like MATLAB, Mathematica requires a paid licence, and it is somewhat more expensive than MATLAB. For personal use, it will cost you around $300, or $150/year.

More about Mathematica can be found on the Wolfram website.

How to Make Process Simulation Work For You

Ivana Lukec, Ph.D. 15.12.2018.

Process simulation is a proven tool: when applied correctly, it helps solve process problems and improves the quality and efficiency of systems and operations. A successful simulation project is one that delivers useful information or a result at the appropriate time to support a meaningful decision or task.

However, process simulation can easily become complex in many respects, and it is often impossible to avoid all of the numerous pitfalls that any simulation project presents. We have selected some tips that will help you recognize and sidestep the worst of them and let you concentrate on obtaining the best results.

In the moments of struggle, whether with the simulation software or with the problem itself, don’t forget:

A simulation study done right is powerful, because it is one of the most effective and reliable ways to influence the decision-making process and, moreover, to make the right decision.

From our standpoint, there are a few critical points to make sure process simulation will work for you:

- Clearly defined goal: it is important to have a clear focus on what needs to be achieved,

- Clearly defined questions that the simulation needs to give answers to,

- Overall picture of the project and the decisions it will influence.

When talking about process simulation, most attention is very often paid to choosing the right simulation software and calculation tools, while in fact the choice of tool is not the most critical decision in using process simulation for problem-solving. Success more often depends on understanding the overall problem and dividing it into smaller pieces. Not even the "perfect" tool can answer these questions for you. Also, if those questions are not understood correctly, the answers could be misinterpreted and lead the whole project into a dead end.

Let’s take a look at some of those imperatives.

Clearly defined goal – defining the objectives

When the decision is made to conduct a simulation project, the first thing to define is the project objectives. This step cannot be emphasized enough, because it prepares and defines your path from the first step to the very end of the project: without an in-depth understanding of the objectives, a successful project is impossible. This includes answering these questions: Why will you simulate the system, and what do you expect to get out of it? More specifically, who are your interested parties and superiors, how do they define the success of the simulation project, and what are their expectations? Which questions and decisions must the simulation project answer? What will be the role or purpose of the simulation project?

Understanding the process

Understanding the process you will describe with the simulation model is key to defining all the goals and objectives. If you are lucky, you will be familiar with the process you are modeling. More typically, you will not know it well enough to model it accurately. Get to know your process and understand it before starting to build the model. While it is not reasonable to expect a simulation engineer to know every process, an experienced engineer will know which questions are important and will be able to understand the answers. Find typical details about the process to be modeled in books and process descriptions. If possible, talk the process through with an engineer who knows it well.

Tackling the wrong problem

If you pick the wrong problem to explore, you may be setting yourself up for failure before you’ve made your first mouse click.

As well as defining what you plan to solve, pay attention to what you do not intend to solve. When this is clear from the very beginning, you are less likely to stray from your simulation path, and possibly get lost, at later stages of the project. Summarize from your initial high-level objectives what you intend to solve and what you do not.

A simulation study's scope is often defined too widely, and before you know it, you are overwhelmed by a long list of unnecessary details. It is difficult to decide where the boundaries should be when studying a complex system, because it often seems as if everything affects the performance parameters driving the decisions. Avoid falling into this trap and make sure you stay on track with the defined problem.

Timing

A successful simulation project is one that delivers a result at the appropriate time, which makes the time one of the most important variables – so plan well!

Simulation is often a process of discovery. As you model and learn about the system you will find new alternatives to explore and possibly areas of the model requiring more detail. But the best results possible have no value if they are delivered after the decision has been made.

Simulation software selection

For many simulation engineers, selecting the simulation software is considered a bottleneck or the most critical point in developing the model. Although selecting the proper simulation tool is important, it is not more important than all the previous points. Certain simulation packages are regarded as the most valuable, and they certainly are valuable, but all of them are very expensive. This tends to be one of the greatest obstacles to using process simulation more widely. However, don't get discouraged: I assure you there are ways to make a really good simulation work with less expensive or even free simulation tools. Today, some very powerful tools are available as open source and can be used, in the worst case with some restrictions, to solve the majority of process problems.

You can find out more about them at this link.

I assure you that there is no simulation task that cannot be adapted, through the definition of objectives and assumptions, to meet the requirements of the defined goals. So, don't get discouraged: download one of the mentioned tools and get that simulation going!

Presenting the results

While doing the analysis, keep in mind the goals mentioned at the beginning of the article. Your primary goal should be to help make the best decision possible given the time and resources allocated. While you might have other personal goals, such as building credibility or making a profit, those goals are likely to be met if you concentrate on helping your decision-makers.

Although you need data to support your conclusions, do not overwhelm your superiors and decision-makers with too many details. Provide information in the context needed: simple, informative and concise. Also, try to be as non-technical as you can and keep the big picture in view. That is often hard when you are deeply involved in your problem, but it is very important, so keep it in mind.

With all of these challenges, it’s a wonder that anyone can possibly perform a successful simulation. 🙂 But have these tips in mind and you will substantially boost your likelihood of success.

About the author

Ivana holds a Ph.D. in chemical engineering and is a director of the process consulting company "Model", which specializes in process solutions in the areas of mathematical modeling and process simulation, process optimization and design, advanced process control and operator training simulators. She has dedicated her entire career to process simulation and modeling and has published numerous scientific and professional papers.

25 Reasons Why Chemical Engineers Should Know and Apply Process Modeling

List of Most Important Applications of Process Modeling

Although we are mostly unaware of it, models are an integral part of every kind of human activity. Discussions about modeling often turn to complex mathematical expressions. However, this isn't always the case, although most models in engineering are quantitative in nature.

Modelling is also an art and a very creative process! It is an important learning process.

We wanted to list the most important chemical engineering activities that are impossible without mathematical modeling. Here is the list:

- process design

- process development

- reduction of manufacturing costs

- production planning and scheduling

- reduction of time and costs in all stages of the process life-cycle

- increase in process efficiency

- calculations of operation benefits

- process troubleshooting

- equipment sizing

- a better and deeper understanding of the process and its operation

- support for the solutions adopted during the process

- development and exploitation

- ensure an easy technological transfer of the process

- increase the quality of process management

- reveal abilities to handle complex problems

- improved process monitoring

- predicting product qualities

- continuous process optimization

- contribute to reducing pollution

- improve the safety of the plants

- market new products faster

- reduce waste emission while the process is being developed

- improve the quality of the products

- education of engineers

- ensure a high quality of training of the operators.

Did we forget anything? 🙂

More details about mathematical modeling as a key discipline of chemical engineering can be found here.

Application of Simulation Through The Life-cycle of a Process

Modeling and Simulation Through Different Phases of a Process

21.11.2017.

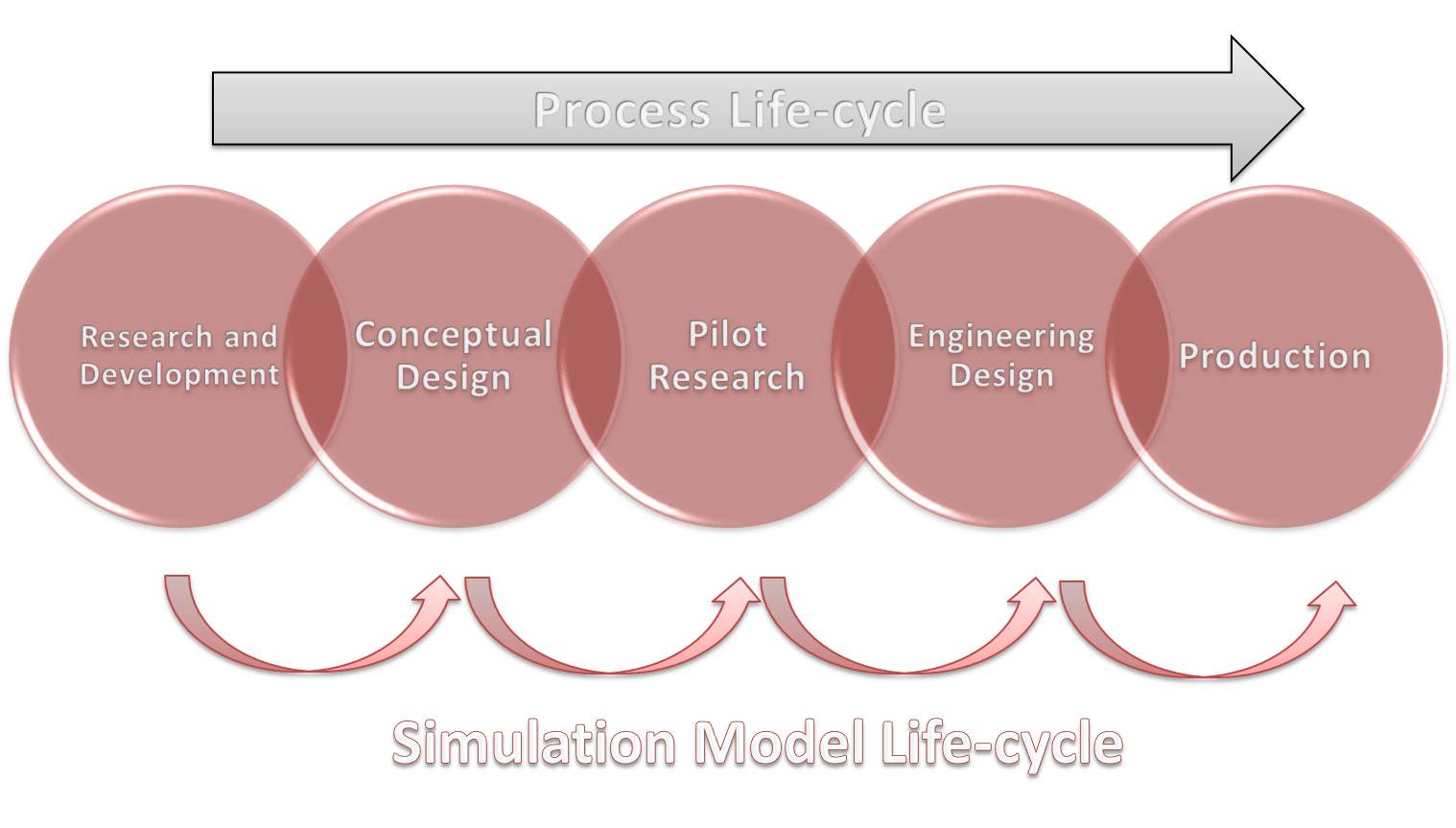

The life-cycle of a chemical product or of a chemical process development starts when a new, original idea is put forward with its practical implementation in mind. The earlier concept of the process life-cycle, which imposed a rigid sequence from research and development to process operation, has been revised. The most important stages of the life-cycle of a process are:

- research and development,

- conceptual design,

- detailed engineering,

- piloting,

- and operation.

These steps partially overlap, and there is also some feedback between them. For example, plant operation models can be a source of valuable tips and potential research topics, which directly concern the research and development (R&D) steps. Preferably, the same models, with some changes, are used in all the steps. Good transfer of information, knowledge and ideas is important for the successful completion of all process phases.

The models are an explicit way of describing the knowledge of the process and related phenomena. They provide a systematic approach to the problems in all the stages of the process life-cycle.

In addition, writing the theory down as mathematical expressions and code reveals its deficiencies in both form and content.

Among the factors that influence the amount of work required to develop a model are the complexity and novelty of the process and the specific knowledge available about it. Commercial modeling software packages are frequently used as an excellent platform for this work.

In the following text, we cover typical simulation models used through the process life-cycle which are shown in the Figure below.

1. Process Modeling Through the Research and Development Stage

The models in the R&D stage can first be simple, and then become more detailed as work proceeds. At this stage, attention has to be focused on the phenomena of phase equilibrium, on the physical properties of the materials, on chemical kinetics as well as on the kinetics of mass and heat transfer.

This action requires careful attention, especially because, at this life-cycle stage, the process could be nothing but an idea.

The work starts with the physical properties, as they are an input to all other parts of the model. Guidelines for choosing physical properties, phase equilibrium data, equations of state, etc. can be found in the standard literature.

For each case studied, we can choose the level of detail, such as the complexity of the equations and the number of parameters. If literature information on the physical properties is limited, an additional experimental step may be necessary. For industrial applications, reaction kinetics are usually estimated semi-empirically, so a full and detailed form of the kinetic equations is not expected for most of the cases investigated. Some physical phenomena and their effects may require special attention.
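
As a small illustration of how physical properties feed phase equilibrium, the sketch below computes a bubble-point temperature from the Antoine equation and Raoult's law (ideal liquid and vapour) by bisection. The Antoine constants are commonly tabulated values for benzene and toluene (log10 of pressure in mmHg, temperature in °C) and are included only for illustration; verify them against your own data source before use.

```python
# Antoine constants: log10(Psat / mmHg) = A - B / (C + T / degC).
# Commonly tabulated values, for illustration only.
ANTOINE = {
    "benzene": (6.90565, 1211.033, 220.790),
    "toluene": (6.95464, 1344.8, 219.482),
}


def psat_mmhg(component, t_c):
    a, b, c = ANTOINE[component]
    return 10 ** (a - b / (c + t_c))


def bubble_point_c(x, p_mmhg, lo=0.0, hi=200.0, tol=1e-6):
    """Bubble-point temperature (degC) from Raoult's law, solved by bisection.

    x maps component name -> liquid mole fraction (should sum to 1).
    """
    def residual(t_c):
        return sum(xi * psat_mmhg(name, t_c) for name, xi in x.items()) - p_mmhg

    if residual(lo) > 0 or residual(hi) < 0:
        raise ValueError("bubble point is not bracketed by [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if residual(mid) > 0:   # too hot: total vapour pressure exceeds P
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)


def vapour_composition(x, p_mmhg, t_c):
    """Equilibrium vapour mole fractions y_i = x_i * Psat_i / P."""
    return {name: xi * psat_mmhg(name, t_c) / p_mmhg for name, xi in x.items()}


if __name__ == "__main__":
    x = {"benzene": 0.5, "toluene": 0.5}
    t = bubble_point_c(x, 760.0)
    print(round(t, 2), vapour_composition(x, 760.0, t))
```

Replacing Raoult's law with an activity-coefficient model or an equation of state changes only the residual function, which is where the choice of property method enters the model.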

Ideally, modeling and experimental work are carried out simultaneously and are closely linked. Models provide a basis for choosing appropriate experimental conditions, both qualitatively and quantitatively. Experimental data are used to confirm or reject theories, or the form of the equations if an empirical model is being applied; otherwise, they are used to estimate the model parameters.
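
A common instance of using experimental data to estimate model parameters is fitting Arrhenius parameters to measured rate constants. The sketch below linearizes k = A·exp(-Ea/(RT)) as ln k = ln A - Ea/(RT) and fits it by least squares with `numpy.polyfit`. The "measurements" are synthetic, generated from assumed parameters with small multiplicative noise, purely to show that the fit recovers them.

```python
import numpy as np

R = 8.314  # gas constant, J/(mol K)


def fit_arrhenius(t_kelvin, k):
    """Return (A, Ea) from a linear least-squares fit of ln k versus 1/T."""
    x = 1.0 / np.asarray(t_kelvin, dtype=float)
    y = np.log(np.asarray(k, dtype=float))
    slope, intercept = np.polyfit(x, y, 1)  # highest power first: [slope, intercept]
    return np.exp(intercept), -slope * R


# Synthetic data from assumed parameters (illustration only, not real measurements).
rng = np.random.default_rng(0)
A_true, Ea_true = 1.0e7, 60_000.0
T = np.linspace(320.0, 380.0, 7)
k_obs = A_true * np.exp(-Ea_true / (R * T)) * np.exp(rng.normal(0.0, 0.02, T.size))

A_fit, Ea_fit = fit_arrhenius(T, k_obs)

if __name__ == "__main__":
    print(f"A = {A_fit:.3g} 1/s, Ea = {Ea_fit / 1000:.1f} kJ/mol")
```

The linearized fit weights errors in ln k; if the measurement errors are better described in k itself, a nonlinear fit on the original form is the usual next step.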

This work is sequential in the sense that starting from an initial guess, the knowledge of the system grows and models get more and more accurate and detailed as the work proceeds.

Based on good knowledge of the phenomena, valuable guidance on optimal operating parameters (such as temperature and pressure ranges, as well as limiting phenomena) can be passed on to the next stages. The degree of detail should be chosen so that the model serves its purpose.

Practically, the best solution is to describe the most relevant phenomena in a detailed way, whereas the less important ones will be left approximate or in an empirical state.

2. Simulation Models at Conceptual Design Stage

Establishing the optimal process structure and the best operating conditions characterizes process development at this stage. First, attention must be focused on process synthesis. The extent to which models can be used in this phase varies. For a new process, information from similar cases may not be available at this stage. Conversely, when the chemical components are well known, which usually means that their properties and all related parameters can be found in databanks, models can be used to check new process ideas quickly. For example, for a multicomponent distillation problem, models are used at this stage to identify key and non-key components, the optimum distillation sequence, the number of ideal stages, the feed position, etc. At this stage, we also always keep the full-scale plant in focus. Another question is how the concept will be carried out in the pilot phase; the pilot equipment does not have to be a miniature of the full-scale plant.
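
A typical quick check for the number of ideal stages at this stage is the Fenske equation, which gives the minimum number of theoretical stages at total reflux from the light-key (LK) and heavy-key (HK) mole fractions in distillate (D) and bottoms (B). The sketch assumes constant relative volatility of the light key to the heavy key; the compositions and volatility in the example are illustrative.

```python
import math


def fenske_min_stages(xd_lk, xd_hk, xb_lk, xb_hk, alpha_lk_hk):
    """Minimum theoretical stages at total reflux (Fenske equation).

    N_min = ln[(xD_LK / xD_HK) * (xB_HK / xB_LK)] / ln(alpha_LK/HK),
    assuming constant relative volatility between the key components.
    """
    if alpha_lk_hk <= 1.0:
        raise ValueError("light key must be more volatile than heavy key (alpha > 1)")
    separation = (xd_lk / xd_hk) * (xb_hk / xb_lk)
    return math.log(separation) / math.log(alpha_lk_hk)


if __name__ == "__main__":
    # Illustrative: 95 % LK in distillate, 95 % HK in bottoms, alpha = 2.5.
    print(round(fenske_min_stages(0.95, 0.05, 0.05, 0.95, 2.5), 2))
```

The minimum stage count is a lower bound; short-cut methods then combine it with the minimum reflux ratio to estimate the stages needed at a finite reflux.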

The practice has shown that the choices made here affect both investment and operating costs later on. An image of the full-scale plant should also be obtained.

The researchers who work at this level will propose some design computations which are needed by the piloting stage of process life-cycle. Their flow-sheet is the basis of the pilot design or development.

3. Modeling at Pilot Stage

The whole process concept is generally refined in the pilot plant. If enough experimental data and knowledge about the process exist (for example, when an older process is reused), much of this stage can be turned into a model-based process analysis. Note also that a pilot plant provides relatively easy access to actual process conditions: bypass or small side streams can be taken from the pilot unit and used to operate apparatus specially designed for experimental work. By now the models should be ready, except for the correct values of the equipment-related parameters.

A special feature of the pilot stage is adding equations that describe non-ideal process hardware to the model in order to compute efficiencies (tray efficiency, heat exchanger efficiency, non-ideality numbers, etc.). This stage is strongly limited in time, so, to be efficient, researchers must prepare a careful experimental program. It may be impossible to foresee every detail, since experiments for parameter estimation are often carried out in sequences, but systematic preparation and organization of the work remain useful.
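
The simplest example of such a hardware correction is the overall column efficiency E_o = N_ideal / N_actual. The sketch below assumes, purely for illustration, that a model needs 10 ideal stages to match a pilot column with 14 real trays, and that the same efficiency carries over to a full-scale design requiring 18 ideal stages. Whether that transfer is valid is exactly the scale-up question discussed in the next stage.

```python
import math


def overall_efficiency(n_ideal_stages, n_actual_trays):
    """Overall column efficiency E_o = ideal stages / actual trays."""
    return n_ideal_stages / n_actual_trays


def actual_trays(n_ideal_stages, e_overall):
    """Real trays needed for a given number of ideal stages, rounded up."""
    if not 0.0 < e_overall <= 1.0:
        raise ValueError("overall efficiency must be in (0, 1]")
    return math.ceil(n_ideal_stages / e_overall)


if __name__ == "__main__":
    # Illustrative pilot data: 10 ideal stages reproduce a 14-tray pilot column.
    e_o = overall_efficiency(10, 14)
    print(round(e_o, 3), actual_trays(18, e_o))
```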

It is important to remember that the goal of the pilot stage, in terms of modeling, is to obtain a valid mass and energy balance model and to validate the home-made models.
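
One common way to turn pilot measurements into a consistent mass balance is weighted least-squares data reconciliation. The sketch below adjusts measured flows as little as possible, weighted by their assumed standard deviations, so that linear balances A·x = 0 hold exactly. It assumes independent random errors and no gross errors; the single-node example (F1 + F2 = F3) and its numbers are illustrative.

```python
import numpy as np


def reconcile(measured, sigma, balance_matrix):
    """Weighted least-squares reconciliation for linear balances A @ x = 0.

    Minimises sum(((x_hat - x) / sigma) ** 2) subject to A @ x_hat = 0,
    whose closed-form solution is x_hat = x - V A^T (A V A^T)^-1 A x.
    """
    x = np.asarray(measured, dtype=float)
    V = np.diag(np.asarray(sigma, dtype=float) ** 2)     # measurement covariance
    A = np.atleast_2d(np.asarray(balance_matrix, dtype=float))
    imbalance = A @ x
    correction = V @ A.T @ np.linalg.solve(A @ V @ A.T, imbalance)
    return x - correction


if __name__ == "__main__":
    # Illustrative mixing node: F1 + F2 - F3 = 0, flows in kg/h.
    x_hat = reconcile([100.0, 50.0, 155.0], [2.0, 1.0, 3.0], [[1.0, 1.0, -1.0]])
    print(x_hat, x_hat[0] + x_hat[1] - x_hat[2])
```

The least accurate measurement (largest sigma) absorbs most of the correction, which is the intended behaviour of the weighting.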

4. Modeling at Detailed Engineering Stage

In this stage, models are used for the purpose for which they have been created: the design and development of a full scale plant which is described in the detailed engineering stage.

On the basis of what has been learned before, the equipment can be scaled-up, taking into consideration pilot phase and related data, as well as the concepts of similitude. Special attention should be paid to the detailed engineering of the possible technical solutions. Depending on their nature, the models can either provide a description of how the system behaves in certain conditions or be used to calculate the detailed geometric measures of the equipment.
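
A classic example of similitude-based scale-up is a geometrically similar stirred tank scaled at constant power per unit volume. Assuming fully turbulent flow (constant power number), P ∝ N³D⁵ and V ∝ D³, so P/V ∝ N³D² and N₂ = N₁(D₁/D₂)^(2/3). The sketch below applies this rule to illustrative pilot values and also shows that impeller tip speed does not stay constant, a reminder that not every similarity criterion can be kept at both scales.

```python
import math


def speed_constant_power_per_volume(n1_rpm, d1_m, d2_m):
    """Impeller speed after geometrically similar scale-up at constant P/V.

    Assumes turbulent flow, so P/V ~ N**3 * D**2 and N2 = N1 * (D1 / D2) ** (2/3).
    """
    return n1_rpm * (d1_m / d2_m) ** (2.0 / 3.0)


def tip_speed_m_s(n_rpm, d_m):
    """Impeller tip speed pi * D * N, with N converted from rpm to 1/s."""
    return math.pi * d_m * n_rpm / 60.0


if __name__ == "__main__":
    # Illustrative: 0.1 m pilot impeller at 300 rpm scaled to a 1.0 m impeller.
    n2 = speed_constant_power_per_volume(300.0, 0.1, 1.0)
    print(round(n2, 1), "rpm")
    print(round(tip_speed_m_s(300.0, 0.1), 2), "->", round(tip_speed_m_s(n2, 1.0), 2), "m/s")
```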

For example, all the dimensions of a distillation column can be calculated once the separation requirements are definitively established. Special consideration should be given to scale-up, because here we must judge whether the same phenomena occur identically at both scales.

It is useful to have detailed documentation of all the assumptions and theories used in the model. The yield and energy consumption of a process are easily optimized using fine-tuned models to design a new unit or process. Depending on the degree of process integration, pinch analysis and similar procedures can be used to find heat-integration solutions. Data on streams and energy consumption, which are easily obtained from simulation results, can be used to support the adopted technical solutions.
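
The core of pinch analysis, the problem table algorithm, fits in a few lines. The sketch below shifts hot streams down and cold streams up by ΔTmin/2, computes the heat surplus or deficit in each temperature interval, cascades it from the top, and reads off the minimum hot and cold utilities and the (shifted) pinch temperature. It assumes constant heat-capacity flow rates; the four streams are illustrative values, not data from a real plant.

```python
from dataclasses import dataclass


@dataclass
class Stream:
    t_supply: float  # degC
    t_target: float  # degC
    cp: float        # heat-capacity flow rate, kW/K (assumed constant)

    @property
    def is_hot(self):
        return self.t_supply > self.t_target


def problem_table(streams, dt_min):
    """Return (min hot utility, min cold utility, shifted pinch temperature)."""
    shifted = []
    for s in streams:
        shift = -dt_min / 2 if s.is_hot else dt_min / 2
        lo, hi = sorted((s.t_supply + shift, s.t_target + shift))
        # Hot streams release heat (+cp), cold streams absorb it (-cp).
        shifted.append((lo, hi, s.cp if s.is_hot else -s.cp))
    temps = sorted({t for lo, hi, _ in shifted for t in (lo, hi)}, reverse=True)

    cascade = [0.0]  # cumulative surplus at each interval boundary, top first
    for t_hi, t_lo in zip(temps, temps[1:]):
        net_cp = sum(cp for lo, hi, cp in shifted if lo <= t_lo and hi >= t_hi)
        cascade.append(cascade[-1] + net_cp * (t_hi - t_lo))

    q_hot = max(0.0, -min(cascade))
    q_cold = cascade[-1] + q_hot
    # Boundary where the corrected cascade is zero (top of cascade if q_hot is 0).
    pinch = temps[cascade.index(min(cascade))]
    return q_hot, q_cold, pinch


streams = [
    Stream(20.0, 135.0, 2.0),   # cold
    Stream(170.0, 60.0, 3.0),   # hot
    Stream(80.0, 140.0, 4.0),   # cold
    Stream(150.0, 30.0, 1.5),   # hot
]

if __name__ == "__main__":
    print(problem_table(streams, dt_min=10.0))
```

With the shifted pinch known, the real pinch temperatures follow by adding ΔTmin/2 for hot streams and subtracting it for cold streams.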

5. Modeling at Operating Stage

At this stage of the process life-cycle, the models must include all relevant physical, chemical and mechanical aspects that characterize the process. The model predictions are compared to actual plant measurements and are further tuned to improve the accuracy of the predictions.

This is especially valuable for the finally adjusted models, which are used to meet the demands of the operating stage and help guarantee optimal production. Models can also be used in many ways to reduce operating costs. In parameter-estimation mode, the model is supplied with process measurements reflecting the current state of the process, which makes it possible, for example, to monitor fouling of a plant heat exchanger.
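
A minimal version of that fouling monitor estimates the current overall heat-transfer coefficient from plant measurements and compares it with the clean-design value. The sketch below assumes a single-pass counter-current exchanger, takes the duty from the cold-side energy balance, and uses Q = U·A·ΔT_lm with R_f = 1/U - 1/U_clean. The measurement values in the example are illustrative.

```python
import math


def lmtd_counter_current(th_in, th_out, tc_in, tc_out):
    """Log-mean temperature difference for a counter-current exchanger."""
    dt1 = th_in - tc_out
    dt2 = th_out - tc_in
    if dt1 <= 0 or dt2 <= 0:
        raise ValueError("temperature cross: check the measurements")
    if math.isclose(dt1, dt2):
        return dt1
    return (dt1 - dt2) / math.log(dt1 / dt2)


def fouling_resistance(m_cold_kg_s, cp_cold_kj_kgk, th_in, th_out, tc_in, tc_out,
                       area_m2, u_clean_kw_m2k):
    """Return (R_f in m2 K/kW, current U in kW/(m2 K)) from one set of measurements."""
    q_kw = m_cold_kg_s * cp_cold_kj_kgk * (tc_out - tc_in)  # cold-side duty
    u_kw_m2k = q_kw / (area_m2 * lmtd_counter_current(th_in, th_out, tc_in, tc_out))
    return 1.0 / u_kw_m2k - 1.0 / u_clean_kw_m2k, u_kw_m2k


if __name__ == "__main__":
    # Illustrative: water 5 kg/s heated 20 -> 40 degC by a hot stream 120 -> 80 degC.
    r_f, u = fouling_resistance(5.0, 4.18, 120.0, 80.0, 20.0, 40.0, 10.0, 0.8)
    print(f"U = {u:.3f} kW/m2K, Rf = {r_f:.3f} m2K/kW")
```

Tracking R_f over time, rather than a single value, is what turns this into a fouling monitor and helps plan cleaning.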

In simulation mode, the performance of the process can be followed. Discrepancies between the model and the process may reveal instrumentation malfunction, problems of maintenance etc.
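
A simple way to spot such discrepancies is to compare model predictions with measurements tag by tag and flag residuals larger than a few measurement standard deviations. In the sketch below, the tag names, values and standard deviations are hypothetical, and the 3-sigma threshold is a common but arbitrary choice.

```python
def standardized_residuals(model, measured, sigma):
    """(measured - model) / sigma for every measured tag."""
    return {tag: (measured[tag] - model[tag]) / sigma[tag] for tag in measured}


def flag_discrepancies(model, measured, sigma, k=3.0):
    """Tags whose residual exceeds k standard deviations, sorted by name."""
    z = standardized_residuals(model, measured, sigma)
    return sorted(tag for tag, value in z.items() if abs(value) > k)


# Hypothetical tags: a flow, a temperature and a pressure.
model = {"FI-101": 100.0, "TI-205": 85.0, "PI-310": 4.2}
measured = {"FI-101": 101.5, "TI-205": 92.0, "PI-310": 4.25}
sigma = {"FI-101": 1.0, "TI-205": 1.5, "PI-310": 0.05}

if __name__ == "__main__":
    print(flag_discrepancies(model, measured, sigma))
```

A flagged tag does not say whether the instrument or the model is wrong; it only tells the engineer where to look first.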

Verified flowsheet models can be used to further analyze the process operation. In the optimising mode, the models are especially used when different grades of the product are manufactured with the process.

The importance of storing process data has been emphasized here. After all, the data are an important link in the creation cycle of the process knowledge.

Future applications concerning the gathering of new data will provide a powerful tool in the use of the stored data or process memory. It is important to keep in mind that, at this stage, the process could be further improved as new ideas, capacity increasing spin-off projects, R&D projects, etc. are developed. These developments frequently require a partial implementation of the methodology described above.

Therefore, the models of the existing process could act as a tool in further developments. In practice, models are often tailor-made and their use requires expertise. Building interfaces, which take into account the special demands arising from man–computer interaction, can greatly expand the use of the models.

Common Pitfalls of Modeling and Simulation

Discussing Most Commonly Seen Simulation Challenges

24.10.2017.

There are many potential pitfalls that face those who embark on a process simulation development effort. This article discusses some of those most commonly seen.

1. Model only what you understand

It can be said that a model is only as useful as the degree to which it represents the actual system being modeled. Indeed, a system, whether a process unit or just a section of one, can only be modeled once it is sufficiently understood. Why, then, do modeling and simulation designers develop invalid models? There are many reasons, the first being that developing a high-fidelity model requires a significant investment of time and effort. Many designers are under time constraints to deliver results. Consequently, careful understanding of the underlying system and rigorous validation of the model are not always an option.

While understandable, this is at the same time unacceptable. It is highly unlikely that a simulation developer can provide a meaningful result when they did not understand the system they were intending to model. While the timeline might have been met, the result was likely meaningless. Worse yet, the result was likely wrong and might have adversely affected larger design or business decisions. Model only what you understand!

If you don’t have a fundamental understanding of a technology, there is no way you can effectively model or simulate that technology.

This step cannot be skipped in a successful modeling and simulation effort. If this step cannot be completed, it is better to not proceed down the path of modeling and simulation development.

2. Understand your model

It is imperative that the simulation engineer fully understands the tools being used. Most simulations are likely to contain errors, even those built with commercial tools. This is especially true of new simulation implementations. Sometimes, simulation implementations make assumptions that do not accurately reflect actual process performance, so one must be careful in defining the basic simulation assumptions.

If the simulation developer utilizes commercial simulation tools for the implementations, it is imperative to allocate the proper amount of time to closely examine and fully understand what that code is doing and what it is not doing.

There is no better way to lose credibility than to not be able to answer questions about one’s own results.

Understand what you have modeled! There are resources available to help with this, including technical support for commercial tools, online groups and user forums for open source tools.

3. Make your results independently repeatable

The first rule of thumb is having the answer to this question: is my model performing as I expected it to perform?

If the answer is "Yes", the new simulation results can also be compared with results in the existing literature, using the same underlying assumptions and parameter conditions. Another good check is to run a different simulation tool with the same process data and assumptions. Results that are very close to each other are good confirmation of your model and confirm that the model results are independently repeatable!
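
The cross-tool check can be made explicit by comparing the key results from both tools within a stated relative tolerance. In the sketch below, the result names and values are hypothetical, the 1 % tolerance is an assumption you should set from your fidelity requirement, and results reported by only one tool are marked `None` so they are not silently ignored.

```python
import math


def compare_results(tool_a, tool_b, rel_tol=0.01):
    """Compare key results from two simulators run with the same data and assumptions.

    Returns {result name: True/False (within rel_tol) or None (missing in one tool)}.
    """
    report = {}
    for key in sorted(set(tool_a) | set(tool_b)):
        if key not in tool_a or key not in tool_b:
            report[key] = None
            continue
        report[key] = math.isclose(tool_a[key], tool_b[key], rel_tol=rel_tol)
    return report


# Hypothetical results of the same column model in two different tools.
tool_a = {"reboiler_duty_kW": 1520.0, "distillate_purity": 0.985,
          "bottoms_T_C": 112.4, "condenser_duty_kW": 1410.0}
tool_b = {"reboiler_duty_kW": 1545.0, "distillate_purity": 0.984, "bottoms_T_C": 112.1}

if __name__ == "__main__":
    for name, ok in compare_results(tool_a, tool_b).items():
        print(name, ok)
```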

4. Carefully define modeling and simulation requirements

This is an activity that is too often ignored or given superficial treatment. The authors would argue that simulation engineers all too often rush into a modeling and simulation effort without a clear idea of what they are hoping to accomplish. This is a surefire recipe for failure.

The first step is to clearly understand the results of interest that would be generated by a simulation. Not all simulation tools necessarily lend themselves to the same types of output results, so it is important to clearly define expectations so that tool selection is an informed process.

The next step is to clearly define the required performance of the simulation to be developed. We will focus on three primary dimensions of performance:

- Cost: The overall investment in resources towards the development and maintenance of the modeling and simulation activity. This includes not only original platform costs, but also development time, upgrade and maintenance costs, and troubleshooting.

- Execution Speed: For a given simulation scenario, how quickly can that simulation complete and provide the desired output results? This is generally governed by software complexity.

- Fidelity: For a given simulation task, how accurately do the simulation's results reflect the performance of the real system?

Note that these dimensions of performance often conflict; not all of them can be achieved simultaneously. Do you want high fidelity? Then the cost will likely be very high. In general, you should prioritize these three dimensions of performance.

A common pitfall is to begin a modeling and simulation effort with unrealistic expectations. Is it really feasible to model all the process components to every little process detail with high fidelity? Probably not. Is it possible to model the entire process to every little detail with many simplifying assumptions? Probably, but it is unlikely to be useful.

When defining requirements and expectations for a modeling and simulation effort, it is recommended to begin by choosing the required fidelity. How accurate must the results be? A successful effort will always begin with this question because, without a meaningful degree of fidelity, any modeling and simulation activity is meaningless.

Once the required fidelity is established, one can then begin placing limitations on simulation capabilities accordingly.

Cost is generally bound by an allocation of resources. So given a known cost constraint and a known fidelity requirement, we can then begin building a conceptual model for the simulation. The target fidelity will mandate the inclusion of particular system characteristics with great detail and inputs with particular degrees of accuracy, and also allow for relaxation on other system details and input accuracy.

Note that this exercise requires a strong understanding of the system being modeled and of the underlying concepts.

Remember, model only what you understand!
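As a minimal sketch of "fix the fidelity first, then let it set the cost", consider a first-order batch reaction dC/dt = -kC, whose exact solution C0·exp(-kt) plays the role of the real system. The hypothetical helpers `euler_batch` and `steps_for_fidelity` below refine an explicit Euler model until its relative error at the final time meets a required tolerance; the number of steps stands in for computational cost. The rate constant, batch time and tolerances are illustrative assumptions, not values from the text.

```python
import math


def euler_batch(c0: float, k: float, t_end: float, n_steps: int) -> float:
    """Integrate dC/dt = -k*C with explicit Euler and return C(t_end)."""
    dt = t_end / n_steps
    c = c0
    for _ in range(n_steps):
        c += dt * (-k * c)
    return c


def steps_for_fidelity(c0: float, k: float, t_end: float, rel_tol: float) -> int:
    """Double the step count until the relative error at t_end is within rel_tol.

    The step count is a proxy for computational cost: a tighter fidelity
    requirement forces a more expensive model.
    """
    exact = c0 * math.exp(-k * t_end)
    n = 1
    while abs(euler_batch(c0, k, t_end, n) - exact) / exact > rel_tol:
        n *= 2
    return n


if __name__ == "__main__":
    # Illustrative values: k = 0.5 1/min, 10 min batch, C0 = 1 mol/L.
    for tol in (0.10, 0.01, 0.001):
        n = steps_for_fidelity(1.0, 0.5, 10.0, tol)
        print(f"relative tolerance {tol:>6}: {n} Euler steps")
```

Here each tenfold tightening of the tolerance costs roughly an order of magnitude more steps, because explicit Euler is only first-order accurate. Switching to a higher-order integrator would change that trade-off, which is exactly the kind of modeling decision that should follow from the fidelity requirement rather than precede it.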

5. Model what you need and no more

One of the first decisions the simulation developer must make is what he or she is attempting to demonstrate through simulation, and what the simplest model is that still captures all necessary components. The engineering tradeoff is that increased detail can provide higher-fidelity output, but at the cost of complexity, which can introduce errors and certainly increases debugging time and execution time.

The designer must also realize that a model is always an abstraction from the real world.

Regardless of the level of detail included, a simulation will always be an approximation of the real system; an arbitrarily high degree of fidelity is generally not possible. Also, the cost of increased fidelity at some point becomes greater than the marginal utility of the additional fidelity.

How much detail is sufficient in a simulation to capture the essence of the real-world process being modeled? Unfortunately, the answer is that it depends on the particular simulation scenario. The simulation engineer should first decide exactly which problem he or she seeks to address through simulation. What are the inputs and the outputs of the model? Some outputs may be independent of specific details in the model, while others may be correlated with them and therefore seriously affected if those components are abstracted.

Simulation always takes the form of an abstraction of a system. Often it allows the designer to gain insight by investigating various operating scenarios of the system. In other cases, the researcher wants to investigate how a process reacts to a single condition that is unlikely to occur in real life; testing the actual system under this condition could be harmful, so simulation may be the only way to examine the problem. The next step is to decide how much of the system must be implemented for the simulation results to be valid. Ultimately, the simulation engineer has to decide the level of detail required in his or her simulation.

First, the developer must consider the engineering tradeoffs between adding more detail to a model and increased computational time, increased complexity, and increased debugging time.

A more abstract approach that focuses only on the basic behavior of a process is generally very flexible, easier to debug, and faster to execute. However, it may not capture the behavior of interest.
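To illustrate how an abstraction can miss the behavior of interest, the sketch below compares the conversion of a first-order reaction in an ideal plug-flow (tubular) reactor, X = 1 - exp(-kτ), with a tanks-in-series approximation, X = 1 - 1/(1 + kτ/N)^N, where N = 1 is the most abstract description: a single well-mixed tank. The reactor example and the value kτ = 3 are assumptions chosen for illustration.

```python
import math


def conversion_tanks_in_series(k_tau: float, n_tanks: int) -> float:
    """First-order conversion for n equal CSTRs in series with total space time tau."""
    return 1.0 - 1.0 / (1.0 + k_tau / n_tanks) ** n_tanks


def conversion_pfr(k_tau: float) -> float:
    """First-order conversion in an ideal plug-flow reactor."""
    return 1.0 - math.exp(-k_tau)


if __name__ == "__main__":
    k_tau = 3.0  # dimensionless k*tau, illustrative
    print(f"PFR reference: X = {conversion_pfr(k_tau):.3f}")
    for n in (1, 2, 5, 20, 100):
        x = conversion_tanks_in_series(k_tau, n)
        print(f"{n:>3} tank(s): X = {x:.3f}")
```

With kτ = 3 the single-tank abstraction predicts 75% conversion against about 95% for plug flow, so if conversion is the output of interest that abstraction is too coarse, while a handful of tanks closes most of the gap. At a small kτ such as 0.1 the two models differ by well under one percentage point, which is why the required level of detail depends on the particular scenario.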

Some Observations on the Practical Use of Modeling and Simulation

Trends in Application of Mathematical Modeling and Simulation in Industry, Research and Innovation

12.10.2017.

Advances in basic knowledge and in model-based process engineering methodologies are resulting in an increasing demand for models. The observations given here are commentaries and considerations on some aspects of modeling, with a focus on:

- reliability of models and simulations,

- role of the industry as final user of modeling and simulation research,

- role of modeling and simulation in innovations,

- role of modeling in technology transfer and knowledge management,

- role of the universities in modeling and simulation development.

Reliability of Models and Simulations

Correctness, reliability and applicability of models are very important. For most engineering purposes, models must have a broad range of applicability and must be validated. If models are not built on these principles, their range of applicability is usually very narrow and they cannot be extrapolated. In many modeling and simulation applications in the process industry, kinetic data and thermodynamic property methods are the most likely sources of error. Errors often occur because models are used outside the scope of their applicability. With the advent of cheap computing power, process modeling has increased in sophistication and, at the same time, has come within reach of people who were previously deterred by complex mathematics and computer programming.
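One practical safeguard against using a model outside its scope is to store the validity range together with the correlation and refuse to extrapolate silently. The sketch below does this for the Antoine vapor-pressure equation, log10(P) = A - B/(C + T), using commonly tabulated constants for water (P in mmHg, T in °C, fitted for roughly 1 to 100 °C). The `AntoineCorrelation` class and its `allow_extrapolation` flag are a hypothetical illustration, not the API of any simulator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AntoineCorrelation:
    """Antoine equation log10(P) = A - B / (C + T) with an explicit validity range."""

    a: float
    b: float
    c: float
    t_min: float  # lower end of the fitted temperature range
    t_max: float  # upper end of the fitted temperature range

    def vapor_pressure(self, t: float, allow_extrapolation: bool = False) -> float:
        """Return P for temperature t; raise unless t is in range or extrapolation is allowed."""
        if not (self.t_min <= t <= self.t_max) and not allow_extrapolation:
            raise ValueError(
                f"T = {t} lies outside the fitted range "
                f"[{self.t_min}, {self.t_max}]; the result would be an extrapolation"
            )
        return 10.0 ** (self.a - self.b / (self.c + t))


# Commonly tabulated constants for water: P in mmHg, T in deg C, valid ~1-100 deg C.
WATER = AntoineCorrelation(a=8.07131, b=1730.63, c=233.426, t_min=1.0, t_max=100.0)

if __name__ == "__main__":
    print(f"P(100 C) = {WATER.vapor_pressure(100.0):.1f} mmHg")  # close to 760 mmHg
    try:
        WATER.vapor_pressure(200.0)
    except ValueError as err:
        print("Rejected:", err)
```

Forcing the caller to opt in to extrapolation does not make the extrapolated value any more reliable; it only makes the decision visible, which is the point of tracking a model's scope of applicability.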

Simulators usually contain a huge number of models, and the user has to choose the right ones for the desired purpose. Making correct calculations is usually not trivial and requires a certain amount of expertise, training, process engineering background and knowledge of sometimes very complex phenomena.

The problem with commercial simulators is that, since simulations can be carried out fairly easily, it is also quite easy to choose the wrong models, and a bad model choice can produce totally incorrect results. Moreover, with commercial simulators there is no access to the source code, so the user cannot be sure that the calculations are made correctly. The existing commercial flowsheeting packages are very comprehensive and efficient, but the potential for misuse and misinterpretation of simulation results is high. In CFD and molecular modeling, the results are often only qualitative. These methods can still be useful, since their results can be used to pre-screen possible experiments and synthesis routes and to visualize particular phenomena.
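To make the point about wrong model choices concrete, the sketch below evaluates the pressure of CO2 at a given temperature and molar volume with two equations of state: the ideal-gas law and the van der Waals equation, P = RT/(V - b) - a/V². The state point, and treating van der Waals as the "better" model, are assumptions for illustration; a real flowsheet would normally use a more accurate property method selected for the system at hand.

```python
R = 8.314  # gas constant, J/(mol K)

# Van der Waals constants for CO2 in SI units
A_CO2 = 0.3640  # Pa m^6 / mol^2
B_CO2 = 4.267e-5  # m^3 / mol


def p_ideal(t: float, v: float) -> float:
    """Ideal-gas pressure in Pa for temperature t (K) and molar volume v (m^3/mol)."""
    return R * t / v


def p_van_der_waals(t: float, v: float, a: float = A_CO2, b: float = B_CO2) -> float:
    """Van der Waals pressure in Pa for temperature t (K) and molar volume v (m^3/mol)."""
    return R * t / (v - b) - a / v**2


if __name__ == "__main__":
    t, v = 300.0, 2.0e-4  # 300 K and 0.2 L/mol: a dense CO2 state, chosen for illustration
    pi, pv = p_ideal(t, v), p_van_der_waals(t, v)
    print(f"ideal gas:     {pi / 1e6:.2f} MPa")
    print(f"van der Waals: {pv / 1e6:.2f} MPa")
    print(f"ideal-gas deviation relative to vdW: {100 * (pi - pv) / pv:.0f}%")
```

At this dense state the ideal-gas law overpredicts the pressure by roughly 85% relative to van der Waals, yet at a low density such as v = 0.025 m³/mol (about 1 bar) the two agree within 1%. That is why an ideal-gas assumption can look harmless until it is applied outside the range where it holds.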

The Role of Industry as Final User of Modelling and Simulation

This role is not clear, except in the case of big companies that have their own research and development divisions. In such companies, the R&D division has specialized teams for implementing modeling and simulation. The properly developed models and simulators are then frequently used, as we have already shown, throughout the life cycle of the particular processes and manufacturing operations that give the company its profile. At the same time, a big company's R&D division can be an important vendor of professional software.

Small companies that are highly specialized in modeling and simulation operate as independent software developers and vendors for the R&D divisions of one or more companies. The use of modeling and simulation in small and medium-sized manufacturing companies is quite limited. Since small manufacturing companies and university researchers do not cooperate much, awareness and knowledge of modern Computer Aided Process Engineering tools are also limited. There are, of course, exceptions. Some small and medium-sized engineering and consulting companies are active users of modeling and simulation tools, which allows them to better justify the solutions they propose to their clients.

Modeling and Simulation in Innovations

Modeling and simulation are usually regarded as support tools in innovative work. They allow fast and easy testing of innovations.

The use of simulators also builds a good basis for understanding complex phenomena and their interactions.

It also builds a good basis for innovative thinking. It is indeed quite important to understand what simulators really do and what the limitations of the models are. Consequently, access to source code is the key to the innovative use of models and simulators. Many commercial programs are stuck in old thinking and well-established models, so in-house simulators are quite often better tools for innovation.

Molecular modeling can be used, for example, to screen potential drug molecules or synthesis methods in order to reduce their number. Existing molecular modeling technology is already good enough to deliver real benefits, and it can be a very efficient and invaluable innovative tool for industry. Artificial intelligence and expert systems are based on existing knowledge. Computers are not creative, which means that these tools cannot be innovative. However, they can be used as tools in innovative development work. While most modeling and simulation methods are just tools in innovative work, process synthesis can be regarded as an innovation generator, i.e., it can find novel solutions by itself.

Role of Modelling in Technology Transfer and Knowledge Management

Models are not only made for specific problem solving. They are also important as databases and as knowledge management or technology transfer tools. For example, an in-house flowsheet simulator is typically a huge set of models containing the most important unit operation models, reactor models, physical property models, thermodynamic models and solver models from the literature, as well as the models developed in the company over years or even decades. Ideally, an in-house simulator is a well-organized and well-documented historical database of models and data. A model is also a technology transfer tool throughout process development and the process life cycle. The problem is that models developed in earlier stages are no longer used in manufacturing. The people in charge of control write simple models for control purposes, and the useful models from earlier stages are simply forgotten. Ideally, the models developed in earlier stages should be used and evaluated in manufacturing, and they should feed information back to the research, conceptual design and detailed design stages. One reason for "forgetting" the model during the process life cycle is that simulators are not integrated: different tools are used in each stage of the process life cycle. However, simulators that integrate steady-state simulation, dynamic simulation, control and operator-training tools are already being developed.

The problem is that the manufacturing people are not always willing to use the models, even though the advantages are clear and the models are made very easy to use.
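The idea of an in-house simulator as a documented historical database of models can be sketched as a small registry that keeps each model together with its origin and records which life-cycle stages reuse it, so that models never reused beyond the stage that created them become visible instead of silently forgotten. `ModelRecord`, `ModelRegistry` and the stage names are hypothetical, chosen only for this illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ModelRecord:
    """One documented model: what it computes, where it came from, who reuses it."""

    name: str
    func: Callable[..., float]
    description: str
    source: str  # literature reference or internal report (free text)
    origin_stage: str  # life-cycle stage in which the model was developed
    used_in: List[str] = field(default_factory=list)


class ModelRegistry:
    """Hypothetical in-house registry that keeps models and their history together."""

    def __init__(self) -> None:
        self._records: Dict[str, ModelRecord] = {}

    def register(self, record: ModelRecord) -> None:
        if record.name in self._records:
            raise KeyError(f"model {record.name!r} is already registered")
        self._records[record.name] = record

    def use(self, name: str, stage: str) -> Callable[..., float]:
        """Return the model's function and record that `stage` relies on it."""
        record = self._records[name]
        if stage not in record.used_in:
            record.used_in.append(stage)
        return record.func

    def unused_outside_origin(self) -> List[str]:
        """Names of models never reused beyond the stage that created them."""
        return [
            r.name
            for r in self._records.values()
            if not any(s != r.origin_stage for s in r.used_in)
        ]


if __name__ == "__main__":
    registry = ModelRegistry()
    registry.register(
        ModelRecord(
            name="first_order_rate",
            func=lambda k, c: k * c,  # r = k*C, illustrative kinetics
            description="First-order reaction rate",
            source="internal lab report (placeholder)",
            origin_stage="research",
        )
    )
    print(registry.unused_outside_origin())  # ['first_order_rate']
    rate = registry.use("first_order_rate", stage="manufacturing")
    print(rate(0.5, 2.0), registry.unused_outside_origin())  # 1.0 []
```

A production system would also need versioning, validity ranges and validation data per model; the sketch only shows the bookkeeping that lets a model follow the process from research into manufacturing.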

Role of the Universities in Modelling and Simulation Development

The importance of modeling and simulation for industrial use is generally promoted, in each factory, by the youngest engineers. The value of computer-aided tools at the factory level is best understood where the application of modeling and simulation already has a history. It is understood less well in sectors that do not use computer-aided tools.

Technical universities have a key role in the education of engineers as well as in research and development. In fact, the universities’ education role is absolutely fundamental for the future development of the industry.

Indeed, in the future, the work of a process engineer will be increasingly concerned with modeling and computation. Moreover, the work will become so demanding that process engineers will need an enormous amount of knowledge not only of physics and chemistry, but also of numerical computation, modeling and programming.

Reference: T. G. Dobre, J. S. Marcano: Chemical Engineering: Modeling, Simulation and Similitude

Process simulation as the key discipline of chemical engineering

Application of process simulation in disciplines of chemical processing

19.08.2017.

Chemical engineering can be defined from many different perspectives. However, all scientists and professionals agree that the process is at its center. To distinguish it from other disciplines, chemical engineering can be defined by its purpose: to develop, design, construct, control, optimize and manage any process involving physical and/or chemical changes, and to make that process profitable without upsetting the environmental balance.