Process safety focuses on preventing fires, explosions and accidental chemical releases in chemical process facilities and other facilities that handle hazardous materials, such as refineries and oil and gas (onshore and offshore) production installations.

Occupational safety and health primarily covers the management of personal safety. Well-developed management systems also address process safety issues. The tools, techniques and programs required to manage both process and occupational safety can sometimes be the same (for example, a work permit system) and in other cases may take very different approaches. LOPA (Layers of Protection Analysis) and QRA (Quantified Risk Assessment), for example, focus on process safety, whereas PPE (Personal Protective Equipment) is very much an individual-focused occupational safety issue.

A common way to explain the various different but connected systems involved in achieving process safety is James T. Reason's Swiss cheese model. In this model, the barriers that prevent, detect, control and mitigate a major accident are depicted as slices of cheese, each with a number of holes. The holes represent imperfections in the barrier, which can be measured against specific performance standards. The better managed the barrier, the smaller these holes will be. When a major accident happens, it is invariably because the imperfections in all the barriers (the holes) have lined up. It is the multiplicity of barriers that provides the protection.
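The Swiss cheese picture has a simple quantitative counterpart in LOPA, mentioned above: if the barriers are assumed to fail independently, the frequency of the accident is the frequency of the initiating event multiplied by each layer's probability of failure on demand (PFD). The sketch below assumes independent layers; the function name and all numbers are hypothetical and chosen only for illustration.

```python
from math import prod


def mitigated_frequency(initiating_per_year: float, pfds: list[float]) -> float:
    """Accident frequency after all protection layers, assuming every layer
    fails independently (the 'holes' line up only by chance)."""
    for p in pfds:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"PFD must be a probability, got {p}")
    return initiating_per_year * prod(pfds)


# Hypothetical case: a cooling failure expected once every 10 years,
# protected by an alarm with operator response (PFD 0.1), a safety
# instrumented function (PFD 0.01) and a relief valve (PFD 0.01).
freq = mitigated_frequency(0.1, [0.1, 0.01, 0.01])
print(f"{freq:.0e} per year")  # 1e-06 per year
```

Independence is the critical assumption: a common cause that disables two layers at once (a shared power supply, say) makes the real frequency higher than this product suggests.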

Process safety generally refers to the prevention of unintentional releases of chemicals, energy, or other potentially dangerous materials (including steam) during the course of chemical processes that can have a serious effect on the plant and the environment. Process safety involves, for example, the prevention of leaks, spills, equipment malfunction, over-pressures, over-temperatures, corrosion, metal fatigue and other similar conditions. Process safety programs focus on design and engineering of facilities, maintenance of equipment, effective alarms, effective control points, procedures and training. It is sometimes useful to consider process safety as the outcome or result of a wide range of technical, management and operational disciplines coming together in an organised way. Process safety chemists will examine both:

- Desired chemical reaction, using a reaction calorimeter: this gives a good measure not only of the reaction heat but also of how much heat is "accumulated" during the various additions of chemicals (i.e. how much heat could be evolved if anything went wrong). The chemist will then, if necessary, vary the reaction conditions to arrive at a process that the proposed plant can control (i.e. the heat output is significantly less than the cooling capacity of the plant) and that has low accumulation (meaning that in the event of any problem, the current addition can be stopped without any danger of overheating).

- Undesired chemical reaction, using one or more of:

These instruments are typically used to examine crude materials that are intended to be purified by distillation; the results allow the chemist to decide on a maximum temperature limit for the process that will not give rise to a thermal runaway.
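The reasoning about accumulated heat can be made concrete with the adiabatic temperature rise, ΔT_ad = Q / (m · c_p): if cooling is lost, heat that has not yet been released can only raise the temperature of the reaction mass. A common screening quantity in the thermal process safety literature (not named in the text above) is the maximum temperature of the synthesis reaction (MTSR), the process temperature plus the accumulated fraction of ΔT_ad. The sketch below compares it with a temperature limit of the kind the chemist sets from the screening tests; the batch data, the limit and the function names are hypothetical.

```python
def adiabatic_rise(heat_kj: float, mass_kg: float, cp_kj_per_kg_k: float) -> float:
    """Temperature rise (K) if heat_kj is released with no cooling at all."""
    return heat_kj / (mass_kg * cp_kj_per_kg_k)


def mtsr(process_temp_c: float, reaction_heat_kj: float, accumulated_fraction: float,
         mass_kg: float, cp_kj_per_kg_k: float) -> float:
    """Highest temperature reachable if cooling fails while accumulated_fraction
    of the total reaction heat has not yet been released."""
    rise = adiabatic_rise(reaction_heat_kj, mass_kg, cp_kj_per_kg_k)
    return process_temp_c + accumulated_fraction * rise


# Hypothetical batch: 2000 kg of mixture, cp = 1.8 kJ/(kg K),
# total reaction heat 360 000 kJ (adiabatic rise of 100 K), run at 60 °C.
T_MAX_C = 120.0  # hypothetical limit set from the screening tests
for x_ac in (1.0, 0.3):  # all reagent charged at once vs. controlled dosing
    t = mtsr(60.0, 360_000.0, x_ac, 2000.0, 1.8)
    print(f"accumulation {x_ac:.0%}: MTSR = {t:.0f} °C, below limit: {t < T_MAX_C}")
```

Lowering accumulation (for example by slower dosing) reduces the MTSR without changing the total reaction heat, which is the trade-off described for the desired reaction.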

Dangerous goods

Dangerous goods, abbreviated DG, are substances that when transported are a risk to health, safety, property or the environment. Certain dangerous goods that pose risks even when not being transported are known as hazardous materials (abbreviated as HAZMAT or hazmat).

Hazardous materials are often subject to chemical regulations. Hazmat teams are personnel specially trained to handle dangerous goods, which include materials that are radioactive, flammable, explosive, corrosive, oxidizing, asphyxiating, biohazardous, toxic, pathogenic, or allergenic. Also included are physical conditions such as compressed gases and liquids or hot materials, all goods containing such materials or chemicals, and goods with other characteristics that render them hazardous in specific circumstances.

In the United States, dangerous goods are often indicated by diamond-shaped signage on the item (see NFPA 704), its container, or the building where it is stored. The color of each diamond indicates its hazard: for example, flammable is indicated with red, because fire and heat are generally associated with red, and explosive is indicated with orange, because mixing red (flammable) with yellow (oxidizing agent) creates orange. A nonflammable and nontoxic gas is indicated with green, because all compressed air vessels were this color in France after World War II, and France was where the diamond system of hazmat identification originated.

Handling

Mitigating the risks associated with hazardous materials may require the application of safety precautions during their transport, use, storage and disposal. Most countries regulate hazardous materials by law, and they are subject to several international treaties as well. Even so, different countries may use different class diamonds for the same product. For example, in Australia, anhydrous ammonia UN 1005 is classified as 2.3 (toxic gas) with subsidiary hazard 8 (corrosive), whereas in the U.S. it is only classified as 2.2 (non-flammable gas).

People who handle dangerous goods will often wear protective equipment, and metropolitan fire departments often have a response team specifically trained to deal with accidents and spills. Persons who may come into contact with dangerous goods as part of their work are also often subject to monitoring or health surveillance to ensure that their exposure does not exceed occupational exposure limits.

Laws and regulations on the use and handling of hazardous materials may differ depending on the activity and status of the material. For example, one set of requirements may apply to their use in the workplace while a different set of requirements may apply to spill response, sale for consumer use, or transportation. Most countries regulate some aspect of hazardous materials.

Global regulations

The most widely applied regulatory scheme is that for the transportation of dangerous goods. The United Nations Economic and Social Council issues the UN Recommendations on the Transport of Dangerous Goods, which form the basis for most regional, national, and international regulatory schemes. For instance, the International Civil Aviation Organization has developed dangerous goods regulations for air transport of hazardous materials that are based upon the UN model but modified to accommodate unique aspects of air transport. Individual airline and governmental requirements are incorporated with this by the International Air Transport Association to produce the widely used IATA Dangerous Goods Regulations (DGR). Similarly, the International Maritime Organization (IMO) has developed the International Maritime Dangerous Goods Code ("IMDG Code", part of the International Convention for the Safety of Life at Sea) for transportation of dangerous goods by sea. IMO member countries have also developed the HNS Convention to provide compensation in case of dangerous goods spills in the sea.

The Intergovernmental Organisation for International Carriage by Rail has developed the regulations concerning the International Carriage of Dangerous Goods by Rail ("RID", part of the Convention concerning International Carriage by Rail). Many individual nations have also structured their dangerous goods transportation regulations to harmonize with the UN model in organization as well as in specific requirements.

The Globally Harmonized System of Classification and Labelling of Chemicals (GHS) is an internationally agreed upon system set to replace the various classification and labeling standards used in different countries. The GHS uses consistent criteria for classification and labeling on a global level.

Classification and labeling summary tables

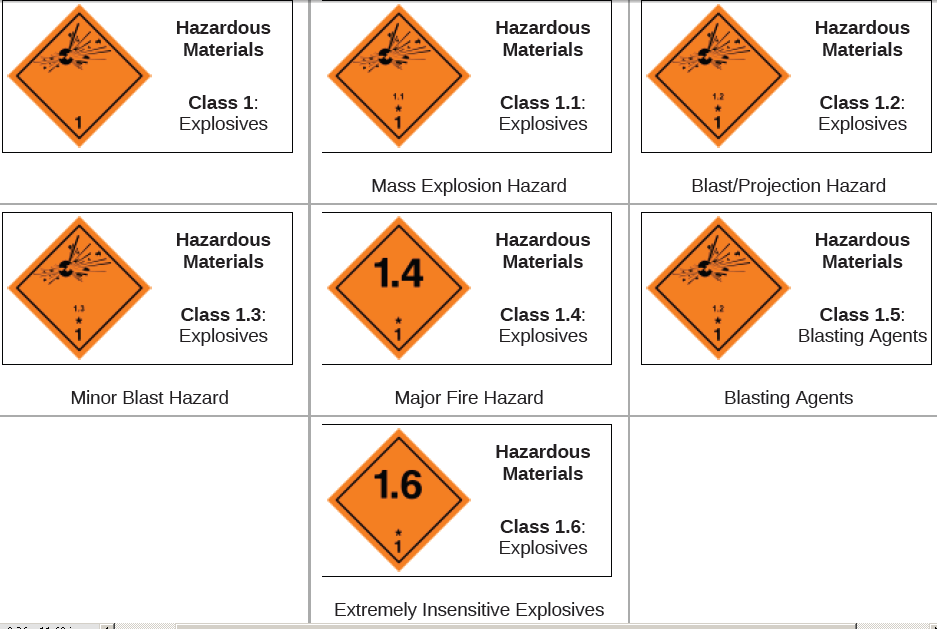

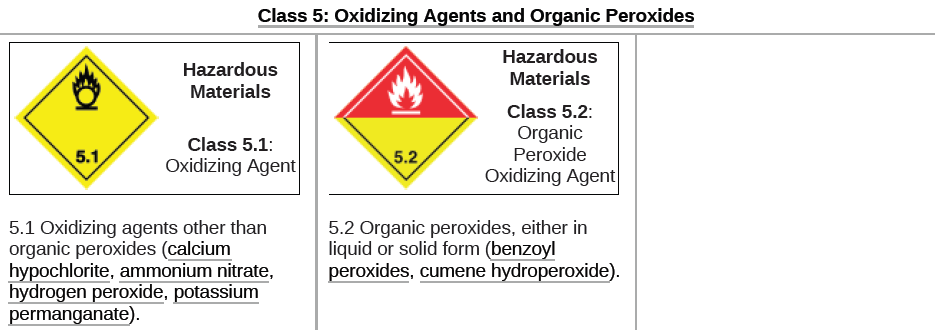

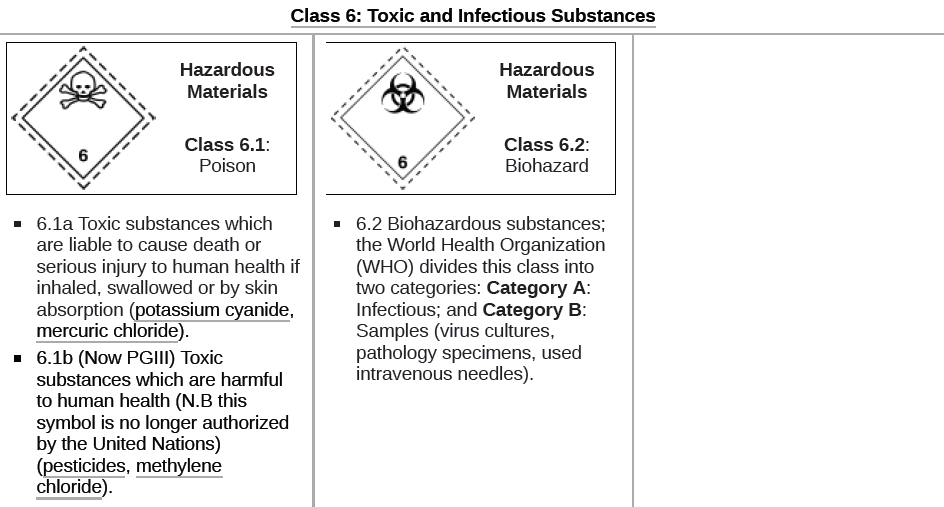

Dangerous goods are divided into nine classes (in addition to several subcategories) on the basis of the specific chemical characteristics producing the risk.

Note: The graphics and text in this article representing the dangerous goods safety marks are derived from the United Nations-based system of identifying dangerous goods. Not all countries use precisely the same graphics (label, placard or text information) in their national regulations. Some use graphic symbols, but without English wording or with similar wording in their national language. Refer to the dangerous goods transportation regulations of the country of interest.

For example, see the TDG Bulletin: Dangerous Goods Safety Marks based on the Canadian Transportation of Dangerous Goods Regulations.

The statement above applies equally to all the dangerous goods classes discussed in this article.

Class 1: Explosives

Information on this graphic changes depending on which "Division" of explosive is shipped. Explosive dangerous goods have compatibility group letters assigned to facilitate segregation during transport. The letters used range from A to S, excluding the letters I, M, O, P, Q and R. The example above shows an explosive with compatibility group "A" (shown as 1.1A). The actual letter shown depends on the specific properties of the substance being transported. For example, the Canadian Transportation of Dangerous Goods Regulations provide a description of compatibility groups.

- 1.1 Explosives with a mass explosion hazard. Ex: TNT, dynamite, nitroglycerine.

- 1.2 Explosives with a severe projection hazard.

- 1.3 Explosives with a fire, blast or projection hazard but not a mass explosion hazard.

- 1.4 Minor fire or projection hazard (includes ammunition and most consumer fireworks).

- 1.5 An insensitive substance with a mass explosion hazard (explosion similar to 1.1).

- 1.6 Extremely insensitive articles.

The United States Department of Transportation (DOT) regulates hazmat transportation within the territory of the US.

- 1.1: Explosives with a mass explosion hazard (nitroglycerin/dynamite, ANFO).

- 1.2: Explosives with a blast/projection hazard.

- 1.3: Explosives with a fire hazard and a minor blast or projection hazard (rocket propellant, display fireworks).

- 1.4: Explosives with a minor explosion hazard (consumer fireworks, ammunition).

- 1.5: Blasting agents.

- 1.6: Extremely insensitive explosives.
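The labelling rule for Class 1 (a division number followed by a compatibility group letter from A to S, skipping I, M, O, P, Q and R) is easy to check mechanically. The sketch below validates a code such as "1.1A"; the function name and the accepted string format are assumptions for illustration. The regulations also restrict which division/group combinations actually occur, and this sketch checks only the two parts separately.

```python
import string

# Letters A..S, excluding the ones the text says are not used.
EXCLUDED = set("IMOPQR")
A_TO_S = string.ascii_uppercase[: string.ascii_uppercase.index("S") + 1]
COMPATIBILITY_GROUPS = [c for c in A_TO_S if c not in EXCLUDED]
DIVISIONS = {"1.1", "1.2", "1.3", "1.4", "1.5", "1.6"}


def parse_explosive_code(code: str) -> tuple[str, str]:
    """Split a hypothetical code like '1.1A' into (division, compatibility group)."""
    division, group = code[:-1], code[-1:]
    if division not in DIVISIONS:
        raise ValueError(f"unknown division in {code!r}")
    if group not in COMPATIBILITY_GROUPS:
        raise ValueError(f"invalid compatibility group in {code!r}")
    return division, group


print("".join(COMPATIBILITY_GROUPS))  # ABCDEFGHJKLNS
print(parse_explosive_code("1.1A"))   # ('1.1', 'A')
```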

Class 2: Gases

Gases which are compressed, liquefied or dissolved under pressure, as detailed below. Some gases have subsidiary risk classes: poisonous or corrosive.

- 2.1 Flammable gases: gases which ignite on contact with an ignition source, such as acetylene, hydrogen, and propane.

- 2.2 Non-flammable gases: gases which are neither flammable nor poisonous. This division includes the cryogenic gases/liquids (temperatures below -100 °C) used for cryopreservation and rocket fuels. Examples include nitrogen, neon, and carbon dioxide.

- 2.3 Poisonous gases: gases liable to cause death or serious injury to human health if inhaled; examples are fluorine, chlorine, and hydrogen cyanide.

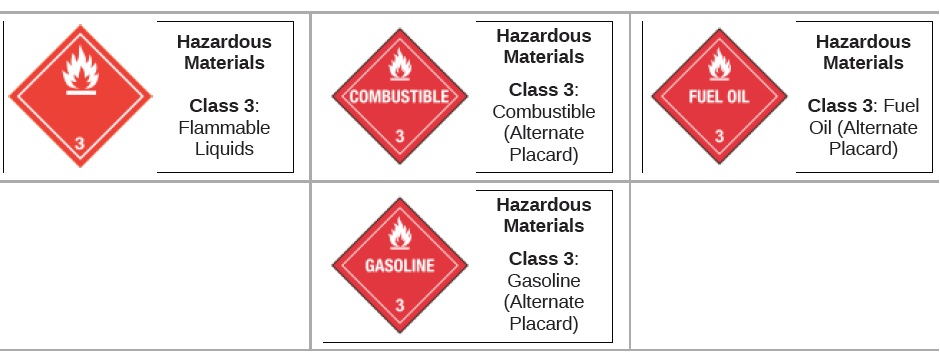

Class 3: Flammable Liquids

Flammable liquids included in Class 3 are assigned to one of the following packing groups:

- Packing Group I, if they have an initial boiling point of 35 °C or less at an absolute pressure of 101.3 kPa and any flash point, such as diethyl ether or carbon disulfide;

- Packing Group II, if they have an initial boiling point greater than 35 °C at an absolute pressure of 101.3 kPa and a flash point less than 23 °C, such as gasoline (petrol) and acetone; or

- Packing Group III, if the criteria for inclusion in Packing Group I or II are not met, such as kerosene and diesel.

Note: For further details, check the dangerous goods transportation regulations of the country of interest.
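The packing-group criteria for Class 3 translate directly into a small decision function. The sketch below follows the boundaries given above (initial boiling point 35 °C, flash point 23 °C). It assumes, beyond the text, that the liquid is already known to be a Class 3 flammable liquid (under the UN Model Regulations, a flash point of not more than 60 °C); the function name is hypothetical and the sketch is no substitute for the regulations of the country of interest.

```python
def class3_packing_group(initial_boiling_point_c: float, flash_point_c: float) -> str:
    """Assign a Class 3 packing group from the criteria quoted in the text.

    Assumes the liquid has already been classified as Class 3.
    """
    if initial_boiling_point_c <= 35.0:
        return "I"    # low boiling point: any flash point
    if flash_point_c < 23.0:
        return "II"   # boils above 35 °C but flashes below 23 °C
    return "III"      # neither of the stricter criteria applies


# Illustrative inputs (approximate physical data, for demonstration only):
print(class3_packing_group(34.6, -45.0))   # diethyl ether -> I
print(class3_packing_group(56.0, -20.0))   # acetone -> II
print(class3_packing_group(180.0, 50.0))   # a kerosene-like liquid -> III
```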

Packing groups

Packing groups are used for the purpose of determining the degree of protective packaging required for dangerous goods during transportation.

- Group I: great danger, and most protective packaging required. Some combinations of different classes of dangerous goods on the same vehicle or in the same container are forbidden if one of the goods is Group I.

- Group II: medium danger

- Group III: minor danger among regulated goods, and least protective packaging within the transportation requirement

Transport documents

One transport requirement is that written instructions on how to deal with emergency situations must be carried and easily accessible in the driver's cabin.

A license or permit card showing hazmat training must be presented when requested by officials.

Dangerous goods shipments also require a special declaration form prepared by the shipper. The information generally required includes the shipper's name and address; the consignee's name and address; descriptions of each of the dangerous goods, along with their quantity, classification, and packaging; and emergency contact information. Common formats include the one issued by the International Air Transport Association (IATA) for air shipments and the form by the International Maritime Organization (IMO) for sea cargo.
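The information listed for a dangerous goods declaration maps naturally onto a simple record that can be checked for completeness before a shipment is tendered. The sketch below is hypothetical: the class and field names are illustrative and do not reproduce the layout of the IATA or IMO forms.

```python
from dataclasses import dataclass, field


@dataclass
class DangerousGoodsItem:
    description: str      # description of the goods
    classification: str   # class/division, e.g. "2.3"
    packing: str          # packaging description
    quantity: str         # quantity with units, e.g. "2 x 50 kg"


@dataclass
class DangerousGoodsDeclaration:
    shipper_name: str
    shipper_address: str
    consignee_name: str
    consignee_address: str
    emergency_contact: str
    items: list[DangerousGoodsItem] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Names of required fields that are blank (hypothetical check)."""
        required = ("shipper_name", "shipper_address", "consignee_name",
                    "consignee_address", "emergency_contact")
        missing = [name for name in required if not getattr(self, name).strip()]
        if not self.items:
            missing.append("items")
        return missing


decl = DangerousGoodsDeclaration("Acme Chemicals", "1 Example Rd", "",
                                 "2 Sample St", "+00 000 0000")
print(decl.missing_fields())  # ['consignee_name', 'items']
```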

Occupational safety and health

Occupational safety and health (OSH), also commonly referred to as occupational health and safety (OHS), occupational health, or workplace health and safety (WHS), is a multidisciplinary field concerned with the safety, health, and welfare of people at work. These terms also refer to the goals of this field, so their use in the sense of this article was originally an abbreviation of occupational safety and health program/department etc.

The goal of occupational safety and health programs is to foster a safe and healthy work environment. OSH may also protect co-workers, family members, employers, customers, and many others who might be affected by the workplace environment. In the United States, the term occupational health and safety is referred to as occupational health and occupational and non-occupational safety and includes safety for activities outside of work.

In common-law jurisdictions, employers have a common law duty to take reasonable care of the safety of their employees. Statute law may in addition impose other general duties, introduce specific duties, and create government bodies with powers to regulate workplace safety issues: details of this vary from jurisdiction to jurisdiction.

Definition

As defined by the World Health Organization (WHO), "occupational health deals with all aspects of health and safety in the workplace and has a strong focus on primary prevention of hazards." Health has been defined as "a state of complete physical, mental and social well-being and not merely the absence of disease or infirmity." Occupational health is a multidisciplinary field of healthcare concerned with enabling an individual to undertake their occupation in the way that causes least harm to their health. It contrasts, for example, with the promotion of health and safety at work, which is concerned with preventing harm from any incidental hazards arising in the workplace.

Since 1950, the International Labour Organization (ILO) and the World Health Organization (WHO) have shared a common definition of occupational health. It was adopted by the Joint ILO/WHO Committee on Occupational Health at its first session in 1950 and revised at its twelfth session in 1995. The definition reads:

"Occupational health should aim at: the promotion and maintenance of the highest degree of physical, mental and social well-being of workers in all occupations; the prevention amongst workers of departures from health caused by their working conditions; the protection of workers in their employment from risks resulting from factors adverse to health; the placing and maintenance of the worker in an occupational environment adapted to his physiological and psychological capabilities; and, to summarize, the adaptation of work to man and of each man to his job."

"The main focus in occupational health is on three different objectives: (i) the maintenance and promotion of workers' health and working capacity; (ii) the improvement of working environment and work to become conducive to safety and health and (iii) development of work organizations and working cultures in a direction which supports health and safety at work and in doing so also promotes a positive social climate and smooth operation and may enhance productivity of the undertakings. The concept of working culture is intended in this context to mean a reflection of the essential value systems adopted by the undertaking concerned. Such a culture is reflected in practice in the managerial systems, personnel policy, principles for participation, training policies and quality management of the undertaking." (Joint ILO/WHO Committee on Occupational Health)

Those in the field of occupational health come from a wide range of disciplines and professions including medicine, psychology, epidemiology, physiotherapy and rehabilitation, occupational therapy, occupational medicine, human factors and ergonomics, and many others. Professionals advise on a broad range of occupational health matters. These include how to avoid particular pre-existing conditions causing a problem in the occupation, correct posture for the work, frequency of rest breaks, preventive action that can be undertaken, and so forth.

History

The research and regulation of occupational safety and health are relatively recent phenomena. As labor movements arose in response to worker concerns in the wake of the industrial revolution, workers' health came to be considered a labor-related issue.

In the United Kingdom, the Factory Acts of the early nineteenth century (from 1802 onwards) arose out of concerns about the poor health of children working in cotton mills: the Act of 1833 created a dedicated professional Factory Inspectorate. The initial remit of the Inspectorate was to police restrictions on the working hours in the textile industry of children and young persons (introduced to prevent chronic overwork, identified as leading directly to ill-health and deformation, and indirectly to a high accident rate). However, on the urging of the Factory Inspectorate, a further Act in 1844 giving similar restrictions on working hours for women in the textile industry introduced a requirement for machinery guarding (but only in the textile industry, and only in areas that might be accessed by women or children).

In 1840 a Royal Commission published its findings on the state of conditions for the workers of the mining industry that documented the appallingly dangerous environment that they had to work in and the high frequency of accidents. The commission sparked public outrage which resulted in the Mines Act of 1842. The act set up an inspectorate for mines and collieries which resulted in many prosecutions and safety improvements, and by 1850, inspectors were able to enter and inspect premises at their discretion.

Otto von Bismarck inaugurated the first social insurance legislation in 1883 and the first workers' compensation law in 1884, the first of their kind in the Western world. Similar acts followed in other countries, partly in response to labor unrest.

Workplace hazards

Although work provides many economic and other benefits, a wide array of workplace hazards also present risks to the health and safety of people at work. These include, but are not limited to, "chemicals, biological agents, physical factors, adverse ergonomic conditions, allergens, a complex network of safety risks," and a broad range of psychosocial risk factors. Personal protective equipment can help protect against many of these hazards.

Physical hazards affect many people in the workplace. Occupational hearing loss is the most common work-related injury in the United States, with 22 million workers exposed to hazardous noise levels at work and an estimated $242 million spent annually on workers' compensation for hearing loss disability. Falls are also a common cause of occupational injuries and fatalities, especially in construction, extraction, transportation, healthcare, and building cleaning and maintenance. Machines have moving parts, sharp edges, hot surfaces and other hazards with the potential to crush, burn, cut, shear, stab or otherwise strike or wound workers if used unsafely.

Biological hazards (biohazards) include infectious microorganisms such as viruses and bacteria, and the toxins these organisms produce, such as anthrax toxin. Biohazards affect workers in many industries; influenza, for example, affects a broad population of workers. Outdoor workers, including farmers, landscapers, and construction workers, risk exposure to numerous biohazards, including animal bites and stings, urushiol from poisonous plants, and diseases transmitted through animals such as the West Nile virus and Lyme disease. Health care workers, including veterinary health workers, risk exposure to blood-borne pathogens and various infectious diseases, especially those that are emerging.

Dangerous chemicals can pose a chemical hazard in the workplace. There are many classifications of hazardous chemicals, including neurotoxins, immune agents, dermatologic agents, carcinogens, reproductive toxins, systemic toxins, asthmagens, pneumoconiotic agents, and sensitizers. Authorities such as regulatory agencies set occupational exposure limits to mitigate the risk of chemical hazards. An international effort is investigating the health effects of mixtures of chemicals. There is some evidence that certain chemicals are harmful at lower levels when mixed with one or more other chemicals. This may be particularly important in causing cancer.

Psychosocial hazards include risks to the mental and emotional well-being of workers, such as feelings of job insecurity, long work hours, and poor work-life balance. A recent Cochrane review, based on moderate-quality evidence, found that adding work-directed interventions to clinical interventions for depressed workers reduces the number of lost work days compared with clinical interventions alone. The review also found that adding cognitive behavioral therapy to primary or occupational care, and adding a "structured telephone outreach and care management program" to usual care, are both effective at reducing sick leave days.

By industry

Specific occupational safety and health risk factors vary by sector and industry. Construction workers might be particularly at risk of falls, for instance, whereas fishermen might be particularly at risk of drowning. The United States Bureau of Labor Statistics identifies the fishing, aviation, lumber, metalworking, agriculture, mining and transportation industries as among the more dangerous for workers. Similarly, psychosocial risks such as workplace violence are more pronounced for certain occupational groups such as health care employees, police, correctional officers and teachers.

Construction

Construction is one of the most dangerous occupations in the world, incurring more occupational fatalities than any other sector in both the United States and the European Union. In 2009, the fatal occupational injury rate among construction workers in the United States was nearly three times that for all workers. Falls are one of the most common causes of fatal and non-fatal injuries among construction workers. Proper safety equipment such as harnesses and guardrails, and procedures such as securing ladders and inspecting scaffolding, can curtail the risk of occupational injuries in the construction industry. Because accidents may have disastrous consequences for employees as well as organizations, it is of utmost importance to ensure the health and safety of workers and compliance with HSE construction requirements. Health and safety legislation in the construction industry involves many rules and regulations. For example, the role of the Construction (Design and Management) (CDM) coordinator, required under UK regulations, has been aimed at improving health and safety on-site.

The 2010 National Health Interview Survey Occupational Health Supplement (NHIS-OHS) identified work organization factors and occupational psychosocial and chemical/physical exposures which may increase some health risks. Among all U.S. workers in the construction sector, 44% had non-standard work arrangements (were not regular permanent employees) compared to 19% of all U.S. workers, 15% had temporary employment compared to 7% of all U.S. workers, and 55% experienced job insecurity compared to 32% of all U.S. workers. Prevalence rates for exposure to physical/chemical hazards were especially high for the construction sector. Among nonsmoking workers, 24% of construction workers were exposed to secondhand smoke while only 10% of all U.S. workers were exposed. Other physical/chemical hazards with high prevalence rates in the construction industry were frequently working outdoors (73%) and frequent exposure to vapors, gas, dust, or fumes (51%).
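Comparisons such as "44% compared to 19%" can be summarised as a prevalence ratio: the sector's prevalence divided by the prevalence among all workers. The minimal sketch below applies this to the 2010 NHIS-OHS figures quoted above; the dictionary layout and function name are illustrative only.

```python
# Prevalence (%) in construction vs. all U.S. workers (2010 NHIS-OHS figures quoted above).
exposures = {
    "non-standard work arrangement": (44, 19),
    "temporary employment": (15, 7),
    "job insecurity": (55, 32),
    "secondhand smoke (nonsmokers)": (24, 10),
}


def prevalence_ratio(sector_pct: float, reference_pct: float) -> float:
    """How many times more common the exposure is in the sector."""
    return sector_pct / reference_pct


for name, (sector, reference) in exposures.items():
    print(f"{name}: {prevalence_ratio(sector, reference):.1f}x")
# non-standard work arrangement: 2.3x, temporary employment: 2.1x,
# job insecurity: 1.7x, secondhand smoke (nonsmokers): 2.4x
```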

Agriculture

Agriculture workers are often at risk of work-related injuries, lung disease, noise-induced hearing loss and skin disease, as well as certain cancers related to chemical use or prolonged sun exposure. On industrialized farms, injuries frequently involve the use of agricultural machinery. The most common cause of fatal agricultural injuries in the United States is tractor rollovers; rollover protective structures (ROPS), which limit the risk of injury if a tractor rolls over, can prevent many of these deaths. Pesticides and other chemicals used in farming can also be hazardous to worker health, and workers exposed to pesticides may experience illnesses or birth defects in their children. As an industry in which children commonly work alongside their families, agriculture is a common source of occupational injuries and illnesses among younger workers. Common causes of fatal injuries among young farm workers include drowning and machinery and motor vehicle-related accidents.

The 2010 NHIS-OHS found elevated prevalence rates of several occupational exposures in the agriculture, forestry, and fishing sector which may negatively impact health. These workers often worked long hours. The prevalence rate of working more than 48 hours a week among workers employed in these industries was 37%, and 24% worked more than 60 hours a week. Of all workers in these industries, 85% frequently worked outdoors compared to 25% of all U.S. workers. Additionally, 53% were frequently exposed to vapors, gas, dust, or fumes, compared to 25% of all U.S. workers.

Service sector

As the number of service sector jobs has risen in developed countries, more and more jobs have become sedentary, presenting a different array of health problems than those associated with manufacturing and the primary sector. Contemporary problems such as the growing rate of obesity and issues relating to occupational stress, workplace bullying, and overwork in many countries have further complicated the interaction between work and health.

According to data from the 2010 NHIS-OHS, hazardous physical/chemical exposures in the service sector were lower than national averages. On the other hand, potentially harmful work organization characteristics and psychosocial workplace exposures were relatively common in this sector. Among all workers in the service industry in 2010, 30% experienced job insecurity, 27% worked non-standard shifts (not a regular day shift), and 21% had non-standard work arrangements (were not regular permanent employees).

Because of the manual labour involved, and measured on a per-employee basis, the US Postal Service, UPS and FedEx are the 4th, 5th and 7th most dangerous companies to work for in the US.

Mining and oil & gas extraction

The mining industry still has one of the highest fatality rates of any industry. A range of hazards is present in surface and underground mining operations. In surface mining, leading hazards include geological stability, contact with plant and equipment, blasting, thermal environments (heat and cold) and respiratory health (black lung). In underground mining operations, hazards include respiratory health, explosions and gas (particularly in coal mine operations), geological instability, electrical equipment, contact with plant and equipment, heat stress, inrush of bodies of water, falls from height, confined spaces and ionising radiation.

According to data from the 2010 NHIS-OHS, workers employed in mining and oil and gas extraction industries had high prevalence rates of exposure to potentially harmful work organization characteristics and hazardous chemicals.

Many of these workers worked long hours: 50% worked more than 48 hours a week and 25% worked more than 60 hours a week in 2010.

Additionally, 42% worked non-standard shifts (not a regular day shift). These workers also had high prevalence of exposure to physical/chemical hazards. In 2010, 39% had frequent skin contact with chemicals. Among nonsmoking workers, 28% of those in mining and oil and gas extraction industries had frequent exposure to secondhand smoke at work. About two-thirds were frequently exposed to vapors, gas, dust, or fumes at work.

Healthcare and social assistance

Healthcare workers are exposed to many hazards that can adversely affect their health and well-being. Long hours, changing shifts, physically demanding tasks, violence, and exposures to infectious diseases and harmful chemicals are examples of hazards that put these workers at risk for illness and injury.

According to the Bureau of Labor Statistics, U.S. hospitals recorded 253,700 work-related injuries and illnesses in 2011, or 6.8 work-related injuries and illnesses for every 100 full-time employees. The injury and illness rate in hospitals is higher than the rates in construction and manufacturing, two industries that are traditionally thought to be relatively hazardous.
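A rate such as 6.8 per 100 full-time employees is an incidence rate of the kind the Bureau of Labor Statistics reports, computed as (number of cases × 200,000) / total hours worked, where 200,000 hours corresponds to 100 employees working 40 hours a week for 50 weeks. The sketch below applies that formula; the hours-worked total is hypothetical, back-calculated so the result matches the quoted rate, and is not a published statistic.

```python
# 100 full-time workers x 40 hours/week x 50 weeks/year
FULL_TIME_BASE_HOURS = 100 * 40 * 50  # = 200,000


def incidence_rate(cases: int, hours_worked: float) -> float:
    """Injuries and illnesses per 100 full-time-equivalent workers."""
    return cases * FULL_TIME_BASE_HOURS / hours_worked


# Hypothetical hours total, chosen so the result matches the quoted 6.8.
print(round(incidence_rate(253_700, 7.46e9), 1))  # 6.8
```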

Management systems

National:

National management system standards for occupational health and safety include AS/NZS 4801-2001 for Australia and New Zealand, CAN/CSA Z1000-14 for Canada and ANSI/ASSE Z10-2012 for the United States. The Association Française de Normalisation (AFNOR) in France also developed occupational safety and health management standards. In the United Kingdom, the Health and Safety Executive, a non-departmental public body, published Managing for health and safety (MFHS), an online guidance document. In Germany, the state factory inspectorates of Bavaria and Saxony introduced the management system OHRIS. In the Netherlands, the management system Safety Certificate Contractors combines management of occupational health and safety and the environment.

International:

ISO 45001 was published in March 2018. Previously, the International Labour Organization (ILO) published ILO-OSH 2001, also titled « Guidelines on occupational safety and health management systems », to assist organizations with introducing OSH management systems. These guidelines encourage continual improvement in employee health and safety. This improvement is achieved through a continuous cycle of policy, organization, planning and implementation, evaluation, and action for improvement, all supported by regular auditing to determine the success of OSH actions.

From 1999 to 2018, the occupational health and safety management system standard OHSAS 18001 was adopted as a British and Polish standard and was widely used internationally. The OHSAS 18000 series comprised two parts, OHSAS 18001 and 18002. It was developed by a selection of leading trade bodies, international standards bodies and certification bodies to fill a gap where no third-party certifiable international standard existed. It was intended to integrate with ISO 9001 and ISO 14001.

Professional roles and responsibilities.

The roles and responsibilities of OSH professionals vary regionally, but may include evaluating working environments, developing, endorsing and encouraging measures that might prevent injuries and illnesses, providing OSH information to employers, employees, and the public, providing medical examinations, and assessing the success of worker health programs.

Europe

In Norway, the main required tasks of an occupational health and safety practitioner include the following:

Systematic evaluations of the working environment.

Endorsing preventive measures which eliminate causes of illnesses in the workplace.

Providing information on the subject of employees’ health.

Providing information on occupational hygiene, ergonomics, and environmental and safety risks in the workplace.

In the Netherlands, the required tasks for health and safety staff are only summarily defined and include the following:

Providing voluntary medical examinations.

Providing a consulting room on the work environment to the workers.

Providing health assessments (if needed for the job concerned).

The main influence of the Dutch law on the job of the safety professional is through the requirement on each employer to use the services of a certified working conditions service to advise them on health and safety. A certified service must employ sufficient numbers of four types of certified experts to cover the risks in the organisations which use the service:

A safety professional.

An occupational hygienist.

An occupational physician.

A work and organisation specialist.

In 2004, 37% of health and safety practitioners in Norway and 14% in the Netherlands had an MSc; 44% had a BSc in Norway and 63% in the Netherlands; and 19% had training as an OSH technician in Norway and 23% in the Netherlands.

US

The main tasks undertaken by the OHS practitioner in the US include:

- Develop processes, procedures, criteria, requirements, and methods to attain the best possible management of the hazards and exposures that can cause injury to people or damage to property or the environment;

- Apply good business practices and economic principles to use resources efficiently and to reinforce the value of safety processes;

- Encourage other members of the company to contribute by exchanging ideas and approaches, so that everyone in the organization has OHS knowledge and a functional role in developing and executing safety procedures;

- Assess services, outcomes, methods, equipment, workstations, and procedures using qualitative and quantitative methods to recognise hazards and measure the related risks;

- Examine all options for effectiveness, reliability, and cost to attain the best results for the company concerned.

Knowledge required by the OHS professional in the US includes:

- Constitutional and case law governing safety, health, and the environment

- Operational procedures to plan/develop safe work practices

- Safety, health and environmental sciences

- Design of hazard control systems (e.g. fall protection, scaffolding)

- Design of recordkeeping systems covering the collection, storage, interpretation, and dissemination of data

- Mathematics and statistics

- Processes and systems for attaining safety through design

Some skills required by the OHS professional in the US include (but are not limited to):

- Understanding and relating to systems, policies and rules

- Applying checks and control methods to potentially hazardous exposures

- Mathematical and statistical analysis

- Examining manufacturing hazards

- Planning safe work practices for systems, facilities, and equipment

- Understanding and using safety, health, and environmental science information for the improvement of procedures

- Interpersonal communication skills

Differences between countries and regions.

Because different countries take different approaches to ensuring occupational safety and health, areas of OSH need and focus also vary between countries and regions. Similar to the findings of the ENHSPO survey conducted in Australia, the Institute of Occupational Medicine in the UK found that there is a need to put greater emphasis on work-related illness in the UK. In contrast, in Australia and the US, a major responsibility of the OHS professional is to keep company directors and managers aware of the issues they face with regard to occupational health and safety principles and legislation.

However, in some other areas of Europe, it is precisely this awareness that has been lacking: “Nearly half of senior managers and company directors do not have an up-to-date understanding of their health and safety-related duties and responsibilities.”

Identifying safety and health hazards

Hazards, risks, outcomes

The terminology used in OSH varies between countries, but generally speaking:

- A hazard is something that can cause harm if not controlled.

- The outcome is the harm that results from an uncontrolled hazard.

- A risk is a combination of the probability that a particular outcome will occur and the severity of the harm involved.

“Hazard”, “risk”, and “outcome” are used in other fields to describe e.g. environmental damage, or damage to equipment. However, in the context of OSH, “harm” generally describes the direct or indirect degradation, temporary or permanent, of the physical, mental, or social well-being of workers. For example, repetitively carrying out manual handling of heavy objects is a hazard. The outcome could be a musculoskeletal disorder (MSD) or an acute back or joint injury. The risk can be expressed numerically (e.g. a 0.5 or 50/50 chance of the outcome occurring during a year), in relative terms (e.g. « high/medium/low »), or with a multi-dimensional classification scheme (e.g. situation-specific risks).

Hazard identification

Hazard identification or assessment is an important step in the overall risk assessment and risk management process. At this step, individual work hazards are identified, assessed, and controlled or eliminated as close to the source (the location of the hazard) as reasonably possible. As technology, resources, social expectations or regulatory requirements change, hazard analysis focuses controls more closely toward the source of the hazard. Thus hazard control is a dynamic program of prevention. Hazard-based programs also have the advantage of not assigning or implying that there are « acceptable risks » in the workplace. A hazard-based program may not be able to eliminate all risks, but neither does it accept « satisfactory » but still risky outcomes. Those who calculate and manage the risk are usually managers, while those exposed to it are a different group (workers). A hazard-based approach can therefore bypass the conflict inherent in a risk-based approach.

The information gathered should apply to the specific type of work from which the hazards can arise. As mentioned previously, examples of sources include the following:

- interviews with people who have worked in the field of the hazard
- the history and analysis of past incidents
- official reports of work and the hazards encountered

Of these, personnel interviews may be the most critical in identifying undocumented practices, events, releases, hazards and other relevant information. Once the information has been gathered, it is recommended that it be archived digitally (to allow quick searching). A physical copy of the same information should also be kept so that it remains accessible. One innovative way to display complex historical hazard information is a historical hazards identification map, which distills the information into an easy-to-use graphical format.
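The text recommends archiving gathered information digitally so that it can be searched quickly. A common way to support quick keyword search is an inverted index, which maps each word to the records that contain it. The sketch below assumes hypothetical record IDs and texts invented for illustration. It is not a description of any particular archiving system.

```python
import re
from collections import defaultdict

# Hypothetical archived records (interview notes, incident history, reports).
RECORDS = {
    "INT-001": "Former operator recalls solvent drums stored behind building 4",
    "INC-017": "Solvent spill near loading dock, 1987, cleaned by site crew",
    "RPT-203": "Inspection report notes asbestos lagging on boiler pipework",
}


def tokenize(text: str) -> list[str]:
    """Lower-case the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(records: dict[str, str]) -> dict[str, set[str]]:
    """Inverted index: map each word to the set of record IDs containing it."""
    index: dict[str, set[str]] = defaultdict(set)
    for record_id, text in records.items():
        for word in tokenize(text):
            index[word].add(record_id)
    return index


def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return the IDs of records that contain every word in the query."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


index = build_index(RECORDS)
print(sorted(search(index, "solvent")))  # ['INC-017', 'INT-001']
```

Because the index is built once, each query only touches the entries for its own words instead of rescanning every archived document.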

Risk assessment

Modern occupational safety and health legislation usually demands that a risk assessment be carried out prior to making an intervention. It should be kept in mind that risk management requires risk to be reduced to a level that is as low as reasonably practicable (ALARP).

This assessment should:

- Identify the hazards

- Identify who may be affected by the hazard, and how

- Evaluate the risk

- Identify and prioritize appropriate control measures

The calculation of risk is based on the likelihood or probability of the harm being realized and the severity of the consequences. This can be expressed mathematically as a quantitative assessment, by assigning integers to low, medium and high likelihood and severity and multiplying them to obtain a risk factor. It can also be expressed qualitatively as a description of the circumstances by which the harm could arise.
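The multiplicative scoring described above can be sketched as follows. The 1 to 3 integer scales and the banding thresholds are assumptions chosen for illustration. Many organisations use 4×4 or 5×5 matrices with their own cut-offs.

```python
# Hypothetical 3-level scales; real matrices often use 4 or 5 levels.
LIKELIHOOD = {"low": 1, "medium": 2, "high": 3}
SEVERITY = {"low": 1, "medium": 2, "high": 3}


def risk_factor(likelihood: str, severity: str) -> int:
    """Multiply the integer scores assigned to likelihood and severity."""
    return LIKELIHOOD[likelihood] * SEVERITY[severity]


def risk_level(score: int) -> str:
    """Band a 1-9 risk factor into a qualitative level (thresholds are assumptions)."""
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


score = risk_factor("high", "medium")
print(score, risk_level(score))  # 6 high
```

The product can only take the values 1, 2, 3, 4, 6 and 9, so the bands here are low (1 to 2), medium (3 to 4) and high (6 to 9).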

The assessment should be recorded and reviewed periodically and whenever there is a significant change to work practices. The assessment should include practical recommendations to control the risk. Once recommended controls are implemented, the risk should be re-calculated to determine if it has been lowered to an acceptable level. Generally speaking, newly introduced controls should lower risk by one level, i.e., from high to medium or from medium to low.
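The re-calculation step can be sketched as a comparison of the risk band before and after a control is introduced. The scales, banding thresholds and the manual-handling example are hypothetical. The check simply counts how many levels the risk moved, which is then compared against the one-level reduction mentioned above.

```python
LEVELS = ["low", "medium", "high"]  # ordered from least to most severe


def band(likelihood: int, severity: int) -> str:
    """Hypothetical banding of a (1-3) x (1-3) likelihood-severity product."""
    score = likelihood * severity
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


def levels_reduced(before: tuple[int, int], after: tuple[int, int]) -> int:
    """Levels by which controls lowered the risk (negative if the risk rose)."""
    return LEVELS.index(band(*before)) - LEVELS.index(band(*after))


# Hypothetical manual-handling task: a lifting aid cuts likelihood from 3 to 2,
# while the severity of a back injury (2) is unchanged.
before, after = (3, 2), (2, 2)
drop = levels_reduced(before, after)
print(band(*before), "->", band(*after), f"({drop} level lower)")
print("meets one-level target:", drop >= 1)
```

Re-scoring after controls also exposes controls that change the likelihood score without moving the risk into a lower band.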

Contemporary developments.

On an international scale, the World Health Organization (WHO) and the International Labour Organization (ILO) have begun focusing on labour environments in developing nations with projects such as Healthy Cities. Many of these developing countries are stuck in a situation in which their relative lack of resources to invest in OSH leads to increased costs due to work-related illnesses and accidents. The European Agency for Safety and Health at Work indicates that nations with less developed OSH systems spend a higher fraction of their gross national product on job-related injuries and illness, taking resources away from more productive activities. The ILO estimates that work-related illness and accidents cost up to 10% of GDP in Latin America, compared with just 2.6% to 3.8% in the EU. Asbestos, a notorious hazard, is still used in some developing countries, so asbestos-related disease is expected to remain a significant problem well into the future.

Augmented Reality, Virtual Reality and Artificial Intelligence.

The impact of technologies on health and safety is an emerging field of research and practice. Augmented reality (AR) offers wide-ranging opportunities for improving health and safety. The health and safety implications of other emerging technologies are not yet well understood. Research on the impact of machine learning on worker health and safety, and on the health and safety profession, is also still emerging. New technologies and ways of working introduce new risks and challenges for work health and safety (WHS) and workers’ compensation, but they also have the potential to make work safer and reduce workplace injury.

Nanotechnology.

Nanotechnology is an example of a new, relatively unstudied technology. A 2006 Swiss survey of 138 companies using or producing nanoparticulate matter resulted in 40 completed questionnaires. Sixty-five per cent of respondent companies stated they did not have a formal risk assessment process for dealing with nanoparticulate matter.

Nanotechnology already presents new issues for OSH professionals that will only become more difficult as nanostructures become more complex.

The size of the particles renders most containment and personal protective equipment ineffective. Toxicology values established for macro-sized industrial substances are inaccurate for nanoparticulate matter because of its unique nature.

As nanoparticulate matter decreases in size, its relative surface area increases dramatically. Any catalytic effect or chemical reactivity therefore increases substantially compared with the known value for the macro-scale substance. Current measures to safeguard the health and welfare of employees will have to be rethought in the near future, because most conventional controls were not designed to manage nanoparticulate substances.
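The growth in relative surface area can be made concrete with the surface-area-to-volume ratio of a sphere. For a sphere, this ratio simplifies to 6/d. The sketch assumes idealised spherical particles. Real nanoparticles are irregular and often agglomerate, so it only illustrates the scaling.

```python
import math


def surface_to_volume(diameter_m: float) -> float:
    """Surface-area-to-volume ratio of a sphere, in 1/m (equal to 6/d)."""
    area = math.pi * diameter_m ** 2
    volume = math.pi * diameter_m ** 3 / 6
    return area / volume


# From a 1 mm grain down to a 10 nm particle.
for label, d in [("1 mm", 1e-3), ("1 um", 1e-6), ("100 nm", 1e-7), ("10 nm", 1e-8)]:
    print(f"{label:>6}: {surface_to_volume(d):.1e} m^2 per m^3")
```

Because the ratio is inversely proportional to diameter, shrinking a particle 1,000-fold exposes about 1,000 times more surface per unit volume of material. This is the basis for the expected rise in reactivity.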

Occupational Health Disparities.

Occupational health disparities refer to differences in occupational injuries and illnesses that are closely linked with demographic, social, cultural, economic, and/or political factors.

Education

There are multiple levels of training applicable to the field of occupational safety and health (OSH). Programs range from individual non-credit certificates, focusing on specific areas of concern, to full doctoral programs. The University of Southern California was one of the first schools in the US to offer a Ph.D. program focusing on the field. Further, multiple master’s degree programs exist, such as those at Indiana State University, which offers a master of science (MS) and a master of arts (MA) in OSH. Graduate programs are designed to train educators as well as high-level practitioners.

Many OSH generalists focus on undergraduate studies. Programs such as the University of North Carolina’s online Bachelor of Science in Environmental Health and Safety fill a large majority of hygienist needs. However, smaller companies often do not have full-time safety specialists on staff, so they assign the responsibility to a current employee. Individuals in such positions, or those seeking to improve their marketability for jobs and promotions, may seek out a credit certificate program. For example, the University of Connecticut’s online OSH Certificate familiarizes students with overarching concepts through a 15-credit (5-course) program. Programs such as these are often adequate tools for building a strong educational platform for new safety managers with a minimal outlay of time and money.

Further, most hygienists seek certification from organizations that train in specific areas of concentration, focusing on isolated workplace hazards. The American Society of Safety Engineers (ASSE), American Board of Industrial Hygiene (ABIH), and American Industrial Hygiene Association (AIHA) offer individual certificates on many different subjects, from forklift operation to waste disposal. They are the chief facilitators of continuing education in the OSH sector. In the U.S., the training of safety professionals is supported by the National Institute for Occupational Safety and Health (NIOSH) through its Education and Research Centers.

In Australia, training in OSH is available at the vocational education and training level, and at university undergraduate and postgraduate level. Such university courses may be accredited by an Accreditation Board of the Safety Institute of Australia. The Institute has produced a Body of Knowledge which it considers is required by a generalist safety and health professional. It also offers a professional qualification based on a four-step assessment.

World Day for Safety and Health at Work

On 28 April, the International Labour Organization marks the « World Day for Safety and Health at Work » to raise awareness of safety in the workplace. Held annually since 2003, it focuses each year on a specific area and bases a campaign around that theme.

FIRE

Fire is the rapid oxidation of a material in the exothermic chemical process of combustion, releasing heat, light, and various reaction products. Slower oxidative processes like rusting or digestion are not included by this definition.

Fire is hot because the conversion of the weak double bond in molecular oxygen, O2, to the stronger bonds in the combustion products carbon dioxide and water releases energy (418 kJ per 32 g of O2); the bond energies of the fuel play only a minor role here. At a certain point in the combustion reaction, called the ignition point, flames are produced. The flame is the visible portion of the fire. Flames consist primarily of carbon dioxide, water vapor, oxygen and nitrogen. If hot enough, the gases may become ionized to produce plasma. The color of the flame and the fire’s intensity will differ depending on the substances alight and any impurities present.
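The figure of 418 kJ per 32 g of O2 implies that the heat released depends mainly on how much oxygen is consumed. The sketch converts that figure to energy per kilogram of oxygen. It then applies it to methane (CH4 + 2 O2 → CO2 + 2 H2O) as a rough estimate. The methane example and the function names are illustrative assumptions. Tabulated heats of combustion for methane are roughly 802 kJ/mol (water as vapor) to 890 kJ/mol (liquid water), and the oxygen-based estimate falls between the two. The same idea underlies oxygen-consumption calorimetry in fire testing.

```python
# Heat released per mole of O2 consumed, from the text: 418 kJ per 32 g of O2.
HEAT_PER_MOL_O2_KJ = 418.0
MOLAR_MASS_O2_G = 32.0


def heat_per_kg_o2_mj() -> float:
    """Energy released per kg of O2 consumed (kJ/g equals MJ/kg numerically)."""
    return HEAT_PER_MOL_O2_KJ / MOLAR_MASS_O2_G


def estimated_heat_kj(mol_o2_per_mol_fuel: float) -> float:
    """Rough heat of combustion per mole of fuel, ignoring the fuel's own bond energies."""
    return mol_o2_per_mol_fuel * HEAT_PER_MOL_O2_KJ


print(f"{heat_per_kg_o2_mj():.2f} MJ per kg O2")     # 13.06 MJ per kg O2
# Methane: CH4 + 2 O2 -> CO2 + 2 H2O, so 2 mol O2 per mol CH4.
print(f"{estimated_heat_kj(2):.0f} kJ per mol CH4")  # 836 kJ per mol CH4
```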

Fire in its most common form can result in conflagration, which has the potential to cause physical damage through burning. Fire is an important process that affects ecological systems around the globe. The positive effects of fire include stimulating growth and maintaining various ecological systems.

The negative effects of fire include hazard to life and property, atmospheric pollution, and water contamination. If fire removes protective vegetation, heavy rainfall may lead to an increase in soil erosion by water. Also, when vegetation is burned, the nitrogen it contains is released into the atmosphere. Elements such as potassium and phosphorus, by contrast, remain in the ash and are quickly recycled into the soil. This loss of nitrogen produces a long-term reduction in the fertility of the soil. That fertility can potentially be recovered as molecular nitrogen in the atmosphere is « fixed ». Fixation happens both through natural phenomena such as lightning and through « nitrogen-fixing » leguminous plants such as clover, peas, and green beans, which convert it to ammonia.

Fire has been used by humans in rituals, in agriculture for clearing land, for cooking, generating heat and light, for signaling, propulsion purposes, smelting, forging, incineration of waste, cremation, and as a weapon or mode of destruction.

Chemistry

Fires start when a flammable or combustible material, in combination with a sufficient quantity of an oxidizer, is exposed to a source of heat or ambient temperature above the flash point for the fuel/oxidizer mix. The oxidizer is usually oxygen gas or another oxygen-rich compound, though non-oxygen oxidizers exist. The mixture must also be able to sustain a rate of rapid oxidation that produces a chain reaction. These four elements (fuel, oxidizer, heat and the chemical chain reaction) are commonly called the fire tetrahedron. Fire cannot exist without all of these elements in place and in the right proportions. For example, a flammable liquid will start burning only if the fuel and oxygen are in the right proportions. Some fuel-oxygen mixes may require a catalyst, a substance that is not consumed in the combustion reaction but enables the reactants to combust more readily.
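The "right proportions" for a fuel-air mixture are usually expressed as lower and upper flammability limits (LFL and UFL). Below the LFL the mixture is too lean to burn, and above the UFL it is too rich. The sketch below uses commonly cited approximate limits in air at ambient conditions. For design work, these values should be checked against a safety data sheet. The mixture function applies Le Chatelier's mixing rule, which is an empirical approximation for combining the LFLs of several fuels.

```python
# Approximate flammability limits in air at ambient conditions, in vol %.
# Commonly cited textbook values; verify against a safety data sheet before use.
LIMITS_VOL_PCT = {
    "methane": (5.0, 15.0),
    "propane": (2.1, 9.5),
    "hydrogen": (4.0, 75.0),
}


def is_flammable(fuel: str, concentration_vol_pct: float) -> bool:
    """True if the fuel-air mixture lies between its lower and upper flammability limits."""
    lower, upper = LIMITS_VOL_PCT[fuel]
    return lower <= concentration_vol_pct <= upper


def mixture_lfl(fuel_fractions: dict[str, float]) -> float:
    """Le Chatelier's rule: LFL_mix = 1 / sum(y_i / LFL_i), y_i = mole fraction in the fuel."""
    return 1.0 / sum(y / LIMITS_VOL_PCT[fuel][0] for fuel, y in fuel_fractions.items())


print(is_flammable("methane", 3.0))   # False: too lean
print(is_flammable("methane", 10.0))  # True
print(is_flammable("methane", 20.0))  # False: too rich
print(f"{mixture_lfl({'methane': 0.5, 'propane': 0.5}):.2f} vol %")  # 2.96 vol %
```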

Once ignited, a chain reaction must take place whereby fires can sustain their own heat by the further release of heat energy in the process of combustion and may propagate, provided there is a continuous supply of an oxidizer and fuel.

If the oxidizer is oxygen from the surrounding air, the presence of a force of gravity, or of some similar force caused by acceleration, is necessary to produce convection, which removes combustion products and brings a supply of oxygen to the fire. Without gravity, a fire rapidly surrounds itself with its own combustion products and non-oxidizing gases from the air, which exclude oxygen and extinguish the fire. Because of this, the risk of fire in a spacecraft is small when it is coasting in inertial flight. This does not apply if oxygen is supplied to the fire by some process other than thermal convection.

Fire can be extinguished by removing any one of the elements of the fire tetrahedron. Consider a natural gas flame, such as from a stove-top burner. The fire can be extinguished by any of the following:

- turning off the gas supply, which removes the fuel source;

- covering the flame completely, which smothers the flame as the combustion both uses the available oxidizer (the oxygen in the air) and displaces it from the area around the flame with CO2;

- application of water, which removes heat from the fire faster than the fire can produce it (similarly, blowing hard on a flame will displace the heat of the currently burning gas from its fuel source, to the same end), or

- application of a retardant chemical such as Halon to the flame, which retards the chemical reaction itself until the rate of combustion is too slow to maintain the chain reaction.
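The stove-top example can be modelled as a simple rule: a fire persists only while all four tetrahedron elements are present, and each extinguishing method removes exactly one of them. The sketch encodes that rule and the mapping from the four methods listed above. The class and function names are illustrative.

```python
from enum import Enum


class Element(Enum):
    FUEL = "fuel"
    OXIDIZER = "oxidizer"
    HEAT = "heat"
    CHAIN_REACTION = "chain reaction"


# Each extinguishing method from the stove-top example and the element it removes.
METHODS = {
    "turn off the gas supply": Element.FUEL,
    "cover the flame": Element.OXIDIZER,
    "apply water": Element.HEAT,
    "apply a retardant such as Halon": Element.CHAIN_REACTION,
}


def can_burn(present: set[Element]) -> bool:
    """A fire is sustained only while every tetrahedron element is present."""
    return set(Element) <= present


def remove_element(method: str, present: set[Element]) -> set[Element]:
    """Return the elements remaining after an extinguishing method is applied."""
    return present - {METHODS[method]}


burning = set(Element)
for method in METHODS:
    print(f"{method}: still burning? {can_burn(remove_element(method, burning))}")
```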

In contrast, fire is intensified by increasing the overall rate of combustion. Methods to do this include balancing the input of fuel and oxidizer to stoichiometric proportions, increasing fuel and oxidizer input in this balanced mix, increasing the ambient temperature so the fire’s own heat is better able to sustain combustion, or providing a catalyst, a non-reactant medium in which the fuel and oxidizer can more readily react.
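Stoichiometric proportions follow from the balanced combustion equation. A hydrocarbon CxHy needs x + y/4 moles of O2 per mole of fuel: CxHy + (x + y/4) O2 → x CO2 + (y/2) H2O. The sketch converts that oxygen demand into the fuel concentration of a stoichiometric fuel-air mixture. It assumes complete combustion, dry air containing 20.95% O2 by volume, and fuels made only of carbon and hydrogen.

```python
O2_FRACTION_IN_AIR = 0.2095  # mole (volume) fraction of O2 in dry air


def o2_per_mol_fuel(c: int, h: int) -> float:
    """Moles of O2 needed to burn one mole of CxHy completely."""
    return c + h / 4


def stoichiometric_fuel_pct(c: int, h: int) -> float:
    """Fuel concentration (vol %) in a stoichiometric fuel-air mixture."""
    mol_air = o2_per_mol_fuel(c, h) / O2_FRACTION_IN_AIR
    return 100 / (1 + mol_air)


print(f"methane: {stoichiometric_fuel_pct(1, 4):.1f} vol %")  # methane: 9.5 vol %
print(f"propane: {stoichiometric_fuel_pct(3, 8):.1f} vol %")  # propane: 4.0 vol %
```

Each result lies inside the corresponding flammable range (about 5 to 15% for methane and 2.1 to 9.5% for propane). Mixtures leaner or richer than these values leave either excess oxygen or unburned fuel.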

Flame.

A flame is a mixture of reacting gases and solids emitting visible, infrared, and sometimes ultraviolet light, the frequency spectrum of which depends on the chemical composition of the burning material and intermediate reaction products. In many cases, such as the burning of organic matter, for example wood, or the incomplete combustion of gas, incandescent solid particles called soot produce the familiar red-orange glow of « fire ».

This light has a continuous spectrum. Complete combustion of gas has a dim blue color due to the emission of single wavelength radiation from various electron transitions in the excited molecules formed in the flame. Usually oxygen is involved, but hydrogen burning in chlorine also produces a flame, producing hydrogen chloride (HCl). Other possible combinations producing flames, amongst many, are fluorine and hydrogen, and hydrazine and nitrogen tetroxide. Hydrogen and hydrazine/UDMH flames are similarly pale blue, while burning boron and its compounds, evaluated in mid-20th century as a high energy fuel for jet and rocket engines, emits intense green flame, leading to its informal nickname of « Green Dragon ».

The glow of a flame is complex. Black-body radiation is emitted from soot, gas, and fuel particles, though the soot particles are too small to behave like perfect blackbodies. There is also photon emission by de-excited atoms and molecules in the gases. Much of the radiation is emitted in the visible and infrared bands. The color depends on temperature for the black-body radiation, and on chemical makeup for the emission spectra. The dominant color in a flame changes with temperature, as a forest fire illustrates. Near the ground, where most burning is occurring, the fire is white or yellow; white is the hottest color possible for organic material in general. Above the yellow region, the color changes to orange, which is cooler, then red, which is cooler still. Above the red region, combustion no longer occurs, and the uncombusted carbon particles are visible as black smoke.
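The temperature dependence of the black-body component can be illustrated with Wien's displacement law, λ_max = b / T, where b ≈ 2.898 × 10⁻³ m·K. The sketch assumes an ideal black body. As noted above, soot particles are not perfect blackbodies, and emission lines from specific chemicals are not covered at all.

```python
WIEN_B = 2.897771955e-3  # Wien's displacement constant, m*K


def peak_wavelength_nm(temp_c: float) -> float:
    """Wavelength of peak black-body emission, in nm, for a temperature in Celsius."""
    temp_k = temp_c + 273.15
    return WIEN_B / temp_k * 1e9


for t in (500, 1000, 1500, 5500):
    print(f"{t:>5} °C -> peak at {peak_wavelength_nm(t):,.0f} nm")
```

At typical flame temperatures the peak lies well into the infrared (about 2,276 nm at 1,000 °C). Only the short-wavelength tail falls in the visible band (roughly 380 to 750 nm). As temperature rises, that tail shifts toward shorter wavelengths, so the visible glow moves from red through orange and yellow toward white.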

Under normal gravity, the shape of a flame depends on convection. Soot tends to rise to the top of the flame, as in a candle, making it yellow. In microgravity or zero gravity, such as in outer space, convection no longer occurs, and the flame becomes spherical, with a tendency to become bluer and more efficient. The flame may go out if it is not moved steadily, however, because the CO2 from combustion does not disperse as readily in microgravity and tends to smother the flame.

There are several possible explanations for this difference, of which the most likely is that the temperature is sufficiently evenly distributed that soot is not formed and complete combustion occurs. Experiments by NASA reveal that diffusion flames in microgravity allow more soot to be completely oxidized after they are produced than diffusion flames on Earth. This is because of a series of mechanisms that behave differently in microgravity than under normal gravity. These discoveries have potential applications in applied science and industry, especially concerning fuel efficiency.

In combustion engines, various steps are taken to eliminate a flame. The method depends mainly on whether the fuel is oil, wood, or a high-energy fuel such as jet fuel.

Flame temperatures

Temperatures of flames by appearance

It is true that objects at specific temperatures do radiate visible light. Objects whose surface is at a temperature above approximately 470 °C (878 °F) will glow, emitting light at a color that indicates the temperature of that surface. However, it is a misconception that one can judge the temperature of a fire by the color of its flames or the sparks in the flames. For many chemical and optical reasons, these colors may not match the red/orange/yellow/white temperatures of a heat-color chart. Barium nitrate burns a bright green, for instance, and this color does not appear on such a chart.

Typical temperatures of flames

The « adiabatic flame temperature » of a given fuel and oxidizer pair is the temperature the combustion gases would reach if no heat were lost to the surroundings.

- Oxy–dicyanoacetylene 4,990 °C (9,000 °F)

- Oxy–acetylene 3,480 °C (6,300 °F)

- Oxyhydrogen 2,800 °C (5,100 °F)

- Air–acetylene 2,534 °C (4,600 °F)

- Blowtorch (air–MAPP gas) 2,200 °C (4,000 °F)

- Bunsen burner (air–natural gas) 1,300 to 1,600 °C (2,400 to 2,900 °F)

- Candle (air–paraffin) 1,000 °C (1,800 °F)

- Smoldering cigarette:

  - Temperature without drawing: 400 °C (750 °F) at the side of the lit portion; 585 °C (1,100 °F) in the middle of the lit portion

  - Temperature during drawing: 700 °C (1,300 °F) in the middle of the lit portion

  - The middle is always hotter.

Fire ecology

Every natural ecosystem has its own fire regime, and the organisms in those ecosystems are adapted to or dependent upon that fire regime. Fire creates a mosaic of different habitat patches, each at a different stage of succession. Different species of plants, animals, and microbes specialize in exploiting a particular stage, and by creating these different types of patches, fire allows a greater number of species to exist within a landscape.

Fossil record

The fossil record of fire first appears with the establishment of a land-based flora in the Middle Ordovician period, 470 million years ago. This flora permitted the accumulation of oxygen in the atmosphere as never before, as the new hordes of land plants pumped it out as a waste product. When this concentration rose above 13%, it permitted the possibility of wildfire. Wildfire is first recorded in the Late Silurian fossil record, 420 million years ago, by fossils of charcoalified plants. Apart from a controversial gap in the Late Devonian, charcoal has been present ever since. The level of atmospheric oxygen is closely related to the prevalence of charcoal: clearly oxygen is the key factor in the abundance of wildfire. Fire also became more abundant when grasses radiated and became the dominant component of many ecosystems, around 6 to 7 million years ago. Grasses provided tinder that allowed fire to spread more rapidly. These widespread fires may have initiated a positive feedback process, whereby they produced a warmer, drier climate more conducive to fire.

Human control

The ability to control fire was a dramatic change in the habits of early humans. Making fire to generate heat and light made it possible for people to cook food, simultaneously increasing the variety and availability of nutrients and reducing disease by killing organisms in the food. The heat produced would also help people stay warm in cold weather, enabling them to live in cooler climates. Fire also kept nocturnal predators at bay. Evidence of cooked food is found from 1.9 million years ago, although fire was probably not used in a controlled fashion until 400,000 years ago. There is some evidence that fire may have been used in a controlled fashion about 1 million years ago. Evidence becomes widespread around 50 to 100 thousand years ago, suggesting regular use from this time. Resistance to air pollution also started to evolve in human populations at around the same time. The use of fire became progressively more sophisticated. By ‘tens of thousands’ of years ago, fire was being used to create charcoal and to control wildlife.

Fire has also been used for centuries as a method of torture and execution, as evidenced by death by burning as well as torture devices such as the iron boot, which could be filled with water, oil, or even lead and then heated over an open fire to the agony of the wearer.

By the Neolithic Revolution, during the introduction of grain-based agriculture, people all over the world used fire as a tool in landscape management. These fires were typically controlled burns or « cool fires », as opposed to uncontrolled « hot fires », which damage the soil. Hot fires destroy plants and animals, and endanger communities. This is especially a problem in the forests of today, where traditional burning is prevented in order to encourage the growth of timber crops. Cool fires are generally conducted in the spring and autumn. They clear undergrowth, burning up biomass that could trigger a hot fire should it get too dense. They provide a greater variety of environments, which encourages game and plant diversity. For humans, they make dense, impassable forests traversable.

Another human use for fire in landscape management is clearing land for agriculture. Slash-and-burn agriculture is still common across much of tropical Africa, Asia and South America. « For small farmers, it is a convenient way to clear overgrown areas and release nutrients from standing vegetation back into the soil », said Miguel Pinedo-Vasquez, an ecologist at the Earth Institute’s Center for Environmental Research and Conservation. However, this useful strategy is also problematic. Growing population, fragmentation of forests and warming climate are making the earth’s surface more prone to ever-larger escaped fires. These fires harm ecosystems and human infrastructure and cause health problems. They also send up spirals of carbon and soot that may encourage even more warming of the atmosphere, and thus feed back into more fires. Globally today, as much as 5 million square kilometres burns in a given year, an area more than half the size of the United States.

There are numerous modern applications of fire. In its broadest sense, fire is used by nearly every human being on earth in a controlled setting every day. Users of internal combustion vehicles employ fire every time they drive. Thermal power stations provide electricity for a large percentage of humanity.

The use of fire in warfare has a long history. Fire was the basis of all early thermal weapons. Homer detailed the use of fire by Greek soldiers who hid in a wooden horse to burn Troy during the Trojan war. Later the Byzantine fleet used Greek fire to attack ships and men. In the First World War, the first modern flamethrowers were used by infantry, and were successfully mounted on armoured vehicles in the Second World War. In the latter war, incendiary bombs were used by Axis and Allies alike, notably on Tokyo, Rotterdam, London, Hamburg and, notoriously, at Dresden. In Hamburg and Dresden, firestorms were deliberately caused: a ring of fire surrounding each city was drawn inward by an updraft caused by a central cluster of fires. The United States Army Air Force also extensively used incendiaries against Japanese targets in the latter months of the war, devastating entire cities constructed primarily of wood and paper houses. Napalm was first used in July 1944, towards the end of the Second World War, although its use did not gain public attention until the Vietnam War. Molotov cocktails were also used.

Use as fuel

Setting fuel aflame releases usable energy. Wood was a prehistoric fuel, and is still viable today. The use of fossil fuels, such as petroleum, natural gas, and coal, in power plants supplies the vast majority of the world’s electricity today; the International Energy Agency states that nearly 80% of the world’s power came from these sources in 2002. The fire in a power station is used to heat water, creating steam that drives turbines. The turbines then spin an electric generator to produce electricity. Fire is also used to provide mechanical work directly, in both external and internal combustion engines.
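Because each step in this chain (fire heating water, steam driving a turbine, the turbine spinning a generator) loses some energy, the electricity delivered is the fuel’s heat multiplied by every stage efficiency. The sketch below illustrates that arithmetic; the fuel mass, heating value and efficiencies are hypothetical placeholders, not figures from the IEA or any real plant.

```python
from math import prod

MJ_PER_KWH = 3.6  # 1 kWh = 3.6 MJ


def electrical_output_kwh(fuel_kg: float, heating_value_mj_per_kg: float,
                          stage_efficiencies: list[float]) -> float:
    """Estimate the electricity delivered by burning `fuel_kg` of fuel.

    Each entry in `stage_efficiencies` is the fraction of incoming energy that
    one conversion stage passes on (e.g. boiler, steam-turbine cycle, generator).
    """
    for eff in stage_efficiencies:
        if not 0.0 < eff <= 1.0:
            raise ValueError(f"efficiency must be in (0, 1]: {eff}")
    heat_released_mj = fuel_kg * heating_value_mj_per_kg  # chemical energy freed by the fire
    return heat_released_mj * prod(stage_efficiencies) / MJ_PER_KWH


# Hypothetical inputs: 1 tonne of coal at 24 MJ/kg,
# boiler 0.90, turbine cycle 0.40, generator 0.98.
print(f"{electrical_output_kwh(1000, 24, [0.90, 0.40, 0.98]):.0f} kWh")  # 2352 kWh
```

Multiplying the stages makes it clear why overall efficiency is always lower than that of the weakest single stage.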

The unburnable solid remains of a combustible material left after a fire are called clinker if their melting point is below the flame temperature, so that they fuse and then solidify as they cool, and ash if their melting point is above the flame temperature.
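The clinker/ash distinction is a single comparison between the residue’s melting point and the flame temperature. A minimal sketch follows; the temperatures are hypothetical, and how to treat the boundary case where the two are equal is not stated in the text, so treating it as ash is an assumption.

```python
def classify_residue(melting_point_c: float, flame_temperature_c: float) -> str:
    """Classify unburnable solid residue as 'clinker' or 'ash'.

    Clinker: the residue melts in the flame (melting point below flame
    temperature), then fuses and solidifies as it cools.
    Ash: the residue never melts (melting point above flame temperature).
    Equal temperatures are treated as 'ash' here (an assumption).
    """
    if melting_point_c < flame_temperature_c:
        return "clinker"
    return "ash"


# Hypothetical temperatures, for illustration only.
print(classify_residue(1100, 1300))  # clinker
print(classify_residue(1500, 1300))  # ash
```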

Protection and prevention

Wildfire prevention programs around the world may employ techniques such as wildland fire use and prescribed or controlled burns. Wildland fire use refers to any naturally caused fire that is monitored but allowed to burn. Controlled burns are fires ignited by government agencies under less dangerous weather conditions.

Firefighting services are provided in most developed areas to extinguish or contain uncontrolled fires. Trained firefighters use fire apparatus and water supply resources such as water mains and fire hydrants, or they might use Class A or Class B foam, depending on what is feeding the fire.

Fire prevention is intended to reduce sources of ignition. Fire prevention also includes education to teach people how to avoid causing fires. Buildings, especially schools and tall buildings, often conduct fire drills to inform occupants and prepare them to react to a building fire. Purposely starting destructive fires constitutes arson and is a crime in most jurisdictions.

Model building codes require passive fire protection and active fire protection systems to minimize damage resulting from a fire. The most common form of active fire protection is fire sprinklers. To maximize passive fire protection of buildings, building materials and furnishings in most developed countries are tested for fire-resistance, combustibility and flammability. Upholstery, carpeting and plastics used in vehicles and vessels are also tested.

Where fire prevention and fire protection have failed to prevent damage, fire insurance can mitigate the financial impact.

Restoration

Different restoration methods and measures are used depending on the type of fire damage that occurred. Restoration after fire damage can be performed by property management teams, building maintenance personnel, or the homeowners themselves; however, contacting a certified professional fire damage restoration specialist is often regarded as the safest way to restore fire-damaged property, because of their training and experience. Such specialists are usually listed under “Fire and Water Restoration”, and they can help speed repairs, whether for individual homeowners or for the largest of institutions.

Fire and Water Restoration companies are regulated by the appropriate state’s Department of Consumer Affairs, usually through the state contractors license board. In California, all Fire and Water Restoration companies must register with the California Contractors State License Board. Presently, the board has no specific classification for “water and fire damage restoration”. Hence, it requires both an asbestos certification (ASB) and a demolition classification (C-21) in order to perform Fire and Water Restoration work.

Explosion

An explosion is a rapid increase in volume and release of energy in an extreme manner, usually with the generation of high temperatures and the release of gases. Supersonic explosions created by high explosives are known as detonations and travel via supersonic shock waves. Subsonic explosions are created by low explosives through a slower burning process known as deflagration.
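The detonation/deflagration split can be expressed as a Mach-number test: a reaction front moving faster than sound in the unreacted material is a detonation, a slower one is a deflagration. The sketch below assumes that definition; the speeds in the example are hypothetical, and treating exactly Mach 1 as subsonic is an arbitrary choice.

```python
def classify_explosion(front_speed_m_s: float, sound_speed_m_s: float) -> str:
    """Return 'detonation' if the reaction front outruns sound in the
    unreacted material (supersonic), else 'deflagration' (subsonic)."""
    if front_speed_m_s <= 0 or sound_speed_m_s <= 0:
        raise ValueError("speeds must be positive")
    mach_number = front_speed_m_s / sound_speed_m_s
    # Treating exactly Mach 1 as subsonic is an arbitrary choice for this sketch.
    return "detonation" if mach_number > 1.0 else "deflagration"


# Hypothetical speeds, for illustration only.
print(classify_explosion(7000.0, 2500.0))  # detonation
print(classify_explosion(300.0, 2500.0))   # deflagration
```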

Causes

Natural

Explosions can occur in nature due to a large influx of energy. Most natural explosions arise from volcanic or stellar processes of various sorts. Explosive volcanic eruptions occur when magma rising from below has much dissolved gas in it; the reduction of pressure as the magma rises causes the gas to bubble out of solution, resulting in a rapid increase in volume. Explosions also occur as a result of impact events and in phenomena such as hydrothermal explosions (also due to volcanic processes). Explosions can also occur beyond Earth, in events such as supernovae. Explosions frequently occur during bushfires in eucalyptus forests, where the volatile oils in the treetops suddenly combust.
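To see why exsolving gas produces such a large volume increase, one can estimate the vapor released by a parcel of magma with the ideal gas law, V = nRT/p. This is a rough sketch under loudly stated assumptions: the magma density, water content and temperature are hypothetical, all dissolved water is assumed to exsolve, and the vapor is treated as an ideal gas at atmospheric pressure.

```python
R = 8.314462618     # molar gas constant, J/(mol*K)
M_WATER = 0.018015  # molar mass of water, kg/mol


def exsolved_gas_volume_m3(magma_volume_m3: float, magma_density_kg_m3: float,
                           water_mass_fraction: float, temperature_k: float,
                           pressure_pa: float) -> float:
    """Volume of water vapor released from a parcel of magma, assuming all
    dissolved water exsolves and behaves as an ideal gas (V = nRT / p)."""
    water_kg = magma_volume_m3 * magma_density_kg_m3 * water_mass_fraction
    moles = water_kg / M_WATER
    return moles * R * temperature_k / pressure_pa


# Hypothetical parcel: 1 m^3 of magma, 2500 kg/m^3, 4 wt% water, 1100 K,
# vapor expanded to roughly atmospheric pressure (1e5 Pa).
v = exsolved_gas_volume_m3(1.0, 2500.0, 0.04, 1100.0, 1e5)
print(f"{v:.0f} m^3 of vapor per m^3 of magma")
```

Under these assumptions each cubic metre of magma yields on the order of 500 cubic metres of vapor, which conveys the scale of expansion behind an explosive eruption.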

Astronomical

Among the largest known explosions in the universe are supernovae, which result when a star explodes from the sudden starting or stopping of nuclear fusion, and gamma-ray bursts, whose nature is still in some dispute. Solar flares are an example of a common explosion on the Sun, and presumably on most other stars as well. The energy for solar flares comes from the tangling of magnetic field lines caused by the rotation of the Sun’s electrically conductive plasma. Another type of large astronomical explosion occurs when a very large meteoroid or an asteroid impacts the surface of another object, such as a planet.

Chemical

The most common artificial explosives are chemical explosives, usually involving a rapid and violent oxidation reaction that produces large amounts of hot gas. Gunpowder was the first explosive to be discovered and put to use. Other notable early developments in chemical explosive technology were Frederick Augustus Abel’s development of nitrocellulose in 1865 and Alfred Nobel’s invention of dynamite in 1866. Chemical explosions (both intentional and accidental) are often initiated by an electric spark or flame in the presence of oxygen. Accidental explosions may occur in fuel tanks, rocket engines, etc.

Electrical and magnetic

A high-current electrical fault can create an ‘electrical explosion’ by forming a high-energy electrical arc, which rapidly vaporizes metal and insulation material. This arc flash hazard is a danger to people working on energized switchgear. Excessive magnetic pressure within an ultra-strong electromagnet can also cause a magnetic explosion.
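The magnetic pressure in an electromagnet grows with the square of the flux density, p = B²/(2μ₀), which is why only ultra-strong magnets are at risk. The sketch below evaluates that formula; it uses the classical value μ₀ = 4π × 10⁻⁷ H/m (the current SI value differs only in the tenth significant figure), and the field strengths are illustrative.

```python
import math

MU_0 = 4e-7 * math.pi  # vacuum permeability (classical value), H/m


def magnetic_pressure_pa(flux_density_t: float) -> float:
    """Magnetic pressure p = B**2 / (2 * mu_0), with B in tesla."""
    return flux_density_t ** 2 / (2 * MU_0)


for b in (1, 10, 100):
    print(f"{b:>4} T -> {magnetic_pressure_pa(b) / 1e6:,.1f} MPa")
```

Going from 1 T to 100 T raises the pressure by a factor of 10,000, from about 0.4 MPa to roughly 4 GPa, which exceeds the strength of most structural materials.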

Mechanical and vapor

A strictly physical process, as opposed to a chemical or nuclear one, such as the bursting of a sealed or partially sealed container under internal pressure, is often referred to as an explosion. Examples include an overheated boiler or a simple tin can of beans tossed into a fire.
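A common way to estimate the energy released when a gas-filled container bursts is Brode’s equation, E = (p₁ − p₀)V/(γ − 1), where p₁ is the internal pressure, p₀ the ambient pressure, V the vessel volume and γ the gas’s ratio of specific heats. This technique is swapped in here for illustration, not taken from the text; the vessel values are hypothetical, and the TNT comparison uses the conventional 4.184 MJ/kg.

```python
TNT_J_PER_KG = 4.184e6  # conventional TNT energy equivalence, J/kg


def brode_energy_j(burst_pressure_pa: float, ambient_pressure_pa: float,
                   volume_m3: float, gamma: float) -> float:
    """Brode's estimate of the energy released when a gas-filled vessel
    bursts: E = (p1 - p0) * V / (gamma - 1)."""
    if gamma <= 1.0:
        raise ValueError("gamma must exceed 1")
    return (burst_pressure_pa - ambient_pressure_pa) * volume_m3 / (gamma - 1.0)


# Hypothetical vessel: 1 m^3 of air (gamma = 1.4) at 1 MPa absolute,
# bursting into an atmosphere at 101,325 Pa.
e = brode_energy_j(1.0e6, 101_325, 1.0, 1.4)
print(f"{e / 1e6:.2f} MJ, about {e / TNT_J_PER_KG:.2f} kg of TNT")
```

Brode’s formula applies to a compressed gas; a vessel holding hot liquid, such as a boiler, also releases energy from the liquid flashing to vapor, which this sketch ignores.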

Boiling liquid expanding vapor explosions (BLEVEs) are one type of mechanical explosion that can occur when a vessel containing a pressurized liquid is ruptured, causing a rapid increase in volume as the liquid evaporates. The contents of the container may then cause a subsequent chemical explosion, the effects of which can be dramatically more serious; a propane tank in the midst of a fire is an example. In such a case, the effects of the mechanical explosion when the tank fails are compounded by those of the explosion of the released propane (initially liquid, then almost instantaneously gaseous) in the presence of an ignition source. For this reason, emergency workers often differentiate between the two events.
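How much of a superheated liquid turns to vapor the instant its vessel fails can be estimated with a simple adiabatic energy balance: the sensible heat above the atmospheric boiling point supplies the latent heat, giving f ≈ c_p(T − T_b)/h_fg. The sketch below uses this linear approximation (it assumes constant c_p and h_fg); the propane property values are approximate and assumed for illustration only.

```python
def flash_fraction(cp_liquid_kj_kg_k: float, t_initial_k: float,
                   t_boil_k: float, h_vap_kj_kg: float) -> float:
    """Fraction of a superheated liquid that flashes to vapor on sudden
    depressurization to atmospheric pressure (linear adiabatic estimate):
    f = cp * (T - Tb) / h_fg, clipped to the range [0, 1]."""
    f = cp_liquid_kj_kg_k * (t_initial_k - t_boil_k) / h_vap_kj_kg
    return min(max(f, 0.0), 1.0)


# Approximate, assumed propane properties: cp ~2.5 kJ/(kg K),
# normal boiling point ~231 K, latent heat ~426 kJ/kg; tank at 293 K.
print(f"{flash_fraction(2.5, 293.0, 231.0, 426.0):.2f}")
```

Under these assumptions roughly a third of the liquid propane vaporizes almost instantly, and a tank heated by a surrounding fire would flash an even larger fraction.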

Nuclear

In addition to stellar nuclear explosions, there are man-made nuclear weapons: explosive weapons that derive their destructive force from nuclear fission or from a combination of fission and fusion. Because nuclear reactions release vastly more energy per unit mass than chemical reactions, even a nuclear weapon with a small yield is significantly more powerful than the largest conventional explosives available, and a single weapon can completely destroy an entire city.
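The gap between nuclear and chemical energy can be made concrete with a short calculation: count the uranium-235 atoms in a given mass, multiply by roughly 200 MeV per fission, and express the result in kilotons of TNT (1 kt = 4.184 × 10¹² J). This is an order-of-magnitude sketch; the 200 MeV figure is approximate, and it assumes every atom in the mass fissions, which real weapons do not achieve.

```python
AVOGADRO = 6.02214076e23        # atoms per mole
J_PER_MEV = 1.602176634e-13     # joules per MeV
TNT_J_PER_KILOTON = 4.184e12    # conventional kiloton-of-TNT definition
U235_MOLAR_MASS_G = 235.04      # g/mol
ENERGY_PER_FISSION_MEV = 200.0  # approximate energy released per U-235 fission


def fission_energy_kilotons(mass_fissioned_kg: float) -> float:
    """TNT-equivalent yield if every U-235 atom in the given mass fissions."""
    atoms = mass_fissioned_kg * 1000.0 / U235_MOLAR_MASS_G * AVOGADRO
    return atoms * ENERGY_PER_FISSION_MEV * J_PER_MEV / TNT_J_PER_KILOTON


kt = fission_energy_kilotons(1.0)
# 1 kt = 1e6 kg of TNT, so kt * 1e6 is the ratio to 1 kg of TNT.
print(f"{kt:.1f} kt, about {kt * 1e6:.1e} times the energy of 1 kg of TNT")
```

Fissioning a single kilogram of U-235 releases roughly 20 kilotons of TNT equivalent, about twenty million times the energy of the same mass of chemical explosive.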

Properties of explosions

Force

Explosive force is released in a direction perpendicular to the surface of the explosive. If a grenade explodes in mid-air, the blast spreads in all directions (360°). In contrast, in a shaped charge the explosive forces are focused to produce a greater local effect.

Velocity

The speed of the reaction is what distinguishes an explosive reaction from an ordinary combustion reaction. Unless the reaction occurs very rapidly, the thermally expanding gases will be moderately dissipated in the medium, with no large differential in pressure and there will be no explosion. Consider a wood fire. As the fire burns, there certainly is the evolution of heat and the formation of gases, but neither is liberated rapidly enough to build up a sudden substantial pressure differential and then cause an explosion. This can be likened to the difference between the energy discharge of a battery, which is slow, and that of a flash capacitor like that in a camera flash, which releases its energy all at once.
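The battery and flash-capacitor comparison comes down to power, energy divided by the time over which it is released. The sketch below takes one hypothetical quantity of stored energy (a 100 µF capacitor charged to 300 V, using E = CV²/2) and compares releasing it in a millisecond with releasing it over an hour; the component values and durations are illustrative assumptions.

```python
def capacitor_energy_j(capacitance_f: float, voltage_v: float) -> float:
    """Energy stored in a capacitor: E = C * V**2 / 2."""
    return 0.5 * capacitance_f * voltage_v ** 2


def average_power_w(energy_j: float, duration_s: float) -> float:
    """Average power when energy_j is released over duration_s."""
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    return energy_j / duration_s


# Hypothetical flash capacitor: 100 µF charged to 300 V (about 4.5 J).
energy = capacitor_energy_j(100e-6, 300.0)
print(f"released in 1 ms:    {average_power_w(energy, 1e-3):.3g} W")
print(f"released over 1 h:   {average_power_w(energy, 3600.0):.3g} W")
```

The energy is identical in both cases, but the power differs by a factor of 3.6 million. By analogy, an explosion is distinguished from a fire by how quickly its energy is released.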

Evolution of heat

The generation of heat in large quantities accompanies most explosive chemical reactions. The exceptions are called entropic explosives and include organic peroxides such as acetone peroxide. It is the rapid liberation of heat that causes the gaseous products of most explosive reactions to expand and generate high pressures. This rapid generation of high pressures of the released gas constitutes the explosion. The liberation of heat with insufficient rapidity will not cause an explosion. For example, although a unit mass of coal yields five times as much heat as a unit mass of nitroglycerin, coal cannot be used as an explosive (except in the form of coal dust) because the rate at which it yields this heat is quite slow. In fact, a substance that burns less rapidly (slow combustion) may actually evolve more total heat than an explosive that detonates rapidly (fast combustion). In slow combustion, more of the internal energy (chemical potential) of the burning substance is converted into heat released to the surroundings; in fast combustion (detonation), more of it is instead converted into work on the surroundings, leaving less to appear as heat. Heat and work are, thermodynamically, equivalent forms of energy. See heat of combustion for a more thorough treatment of this topic.

When a chemical compound is formed from its constituents, heat may either be absorbed or released. The quantity of heat absorbed or given off during this transformation is called the heat of formation. Heats of formation for solids and gases found in explosive reactions have been determined for a temperature of 25 °C and atmospheric pressure, and are normally given in units of kilojoules per gram-molecule (mole). A positive value indicates that heat is absorbed during the formation of the compound from its elements; such a reaction is called endothermic. In explosive technology only materials whose reaction is exothermic, that is, has a net liberation of heat, are of interest. Reaction heat is measured under conditions of either constant pressure or constant volume. It is this heat of reaction that may properly be expressed as the “heat of explosion”.
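The heat of reaction can be estimated from tabulated heats of formation using Hess’s law: sum the heats of formation of the products and subtract those of the reactants, each weighted by its coefficient in the balanced equation. The sketch below applies this to the decomposition of nitroglycerin. The heats of formation are approximate textbook values assumed for illustration, and because they are enthalpies the result is a constant-pressure heat; the constant-volume value differs slightly.

```python
from collections.abc import Mapping

# Each entry maps a species to (moles in the balanced equation,
# standard heat of formation in kJ/mol).
Side = Mapping[str, tuple[float, float]]


def reaction_enthalpy_kj(reactants: Side, products: Side) -> float:
    """Hess's law: sum(n * dHf) of products minus sum(n * dHf) of reactants.
    A negative result means heat is released (exothermic)."""
    def total(side: Side) -> float:
        return sum(n * dhf for n, dhf in side.values())
    return total(products) - total(reactants)


# Approximate, assumed values for 1 mol of nitroglycerin:
# C3H5N3O9 -> 3 CO2 + 2.5 H2O(g) + 1.5 N2 + 0.25 O2
dh = reaction_enthalpy_kj(
    reactants={"C3H5N3O9": (1.0, -370.0)},
    products={"CO2": (3.0, -393.5), "H2O(g)": (2.5, -241.8),
              "N2": (1.5, 0.0), "O2": (0.25, 0.0)},
)
MOLAR_MASS_NG_G = 227.09  # g/mol
print(f"dH = {dh:.0f} kJ/mol; heat of explosion about {-dh / MOLAR_MASS_NG_G:.1f} kJ/g")
```

Elements in their standard state (N₂, O₂) have a heat of formation of zero, so only the carbon dioxide, water and the explosive itself contribute to the result.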

Initiation of reaction

A chemical explosive is a compound or mixture which, upon the application of heat or shock, decomposes or rearranges with extreme rapidity, yielding much gas and heat. Many substances not ordinarily classed as explosives may do one, or even two, of these things.

A reaction must be capable of being initiated by the application of shock, heat, or a catalyst (in the case of some explosive chemical reactions) to a small portion of the mass of the explosive material. A material that shows the first three characteristics (extremely rapid decomposition or rearrangement, gas formation and heat evolution) cannot be accepted as an explosive unless the reaction can be made to occur when needed.

Fragmentation

Fragmentation is the accumulation and projection of particles as the result of a high-explosive detonation. Fragments could be part of a structure such as a magazine. High-velocity, low-angle fragments can travel hundreds or thousands of feet with enough energy to initiate other surrounding high-explosive items, injure or kill personnel, and damage vehicles or structures.
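The initial velocity of casing fragments is often estimated with the Gurney equations. For a cylindrical charge, V = √(2E) / √(M/C + 1/2), where M/C is the ratio of casing mass to explosive mass and √(2E) is the explosive’s Gurney constant. The Gurney method is introduced here for illustration and is not the source’s own; the constant of about 2,440 m/s (roughly that of TNT), the mass ratio and the 10 g fragment are all assumptions.

```python
import math


def gurney_velocity_cylinder(gurney_constant_m_s: float,
                             metal_to_charge_ratio: float) -> float:
    """Initial fragment velocity for a cylindrical casing (Gurney):
    V = sqrt(2E) / sqrt(M/C + 1/2)."""
    return gurney_constant_m_s / math.sqrt(metal_to_charge_ratio + 0.5)


def kinetic_energy_j(mass_kg: float, velocity_m_s: float) -> float:
    """Kinetic energy of a fragment: E = m * v**2 / 2."""
    return 0.5 * mass_kg * velocity_m_s ** 2


# Assumed: sqrt(2E) ~ 2440 m/s, casing mass equal to charge mass (M/C = 1),
# hypothetical 10 g fragment.
v = gurney_velocity_cylinder(2440.0, 1.0)
print(f"{v:.0f} m/s, {kinetic_energy_j(0.010, v) / 1000:.1f} kJ per fragment")
```

This estimate gives only the launch velocity. Air drag slows fragments in flight, so predicting how far they travel requires a separate ballistic calculation.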

Personal protective equipment (PPE)

Personal protective equipment (PPE) is protective clothing, helmets, goggles, or other garments or equipment designed to protect the wearer’s body from injury or infection. The hazards addressed by protective equipment include physical, electrical, thermal and chemical hazards, biohazards, and airborne particulate matter. Protective equipment may be worn for job-related occupational safety and health purposes, as well as for sports and other recreational activities. “Protective clothing” refers to traditional categories of clothing, and “protective gear” to items such as pads, guards, shields and masks.

The purpose of personal protective equipment is to reduce employee exposure to hazards when engineering controls and administrative controls are not feasible or not effective enough to reduce these risks to acceptable levels. PPE is therefore needed when hazards remain that such controls cannot adequately address. PPE has the serious limitation that it does not eliminate the hazard at the source, and employees may be exposed to the hazard if the equipment fails.

Any item of PPE imposes a barrier between the wearer and the working environment. This can create additional strain on the wearer, impair their ability to carry out their work and cause significant discomfort. Any of these can discourage wearers from using PPE correctly, placing them at risk of injury, ill health or, in extreme circumstances, death. Good ergonomic design can help to minimise these barriers and can therefore help to ensure safe and healthy working conditions through the correct use of PPE.